Lesson 3 — Writing and Defending Your Business Case

Unit 4 | Lesson 3 of 3

By the end of this lesson, you will be able to:

- Write a complete AI Opportunity Business Case using the five-component structure

- Distinguish between strong and weak content in each component and apply that judgement to your own writing

- Use an AI assistant to improve the clarity and persuasiveness of your business case draft

- Prepare for the verbal defence at the 42-day gateway, including the specific questions your coach will probe

Bringing it all together

Over the last two lessons you have built the analytical foundations of your business case: a quantified value estimate, a comparative prioritisation using the Effort/Value matrix, and a structured feasibility check that names both the strengths of your opportunity and the risks that need managing. All of that analysis is now in service of a single output — a written document that makes the case for your project clearly enough that a reader who knows nothing about the process could understand it, and a coach who does know the programme can assess it.

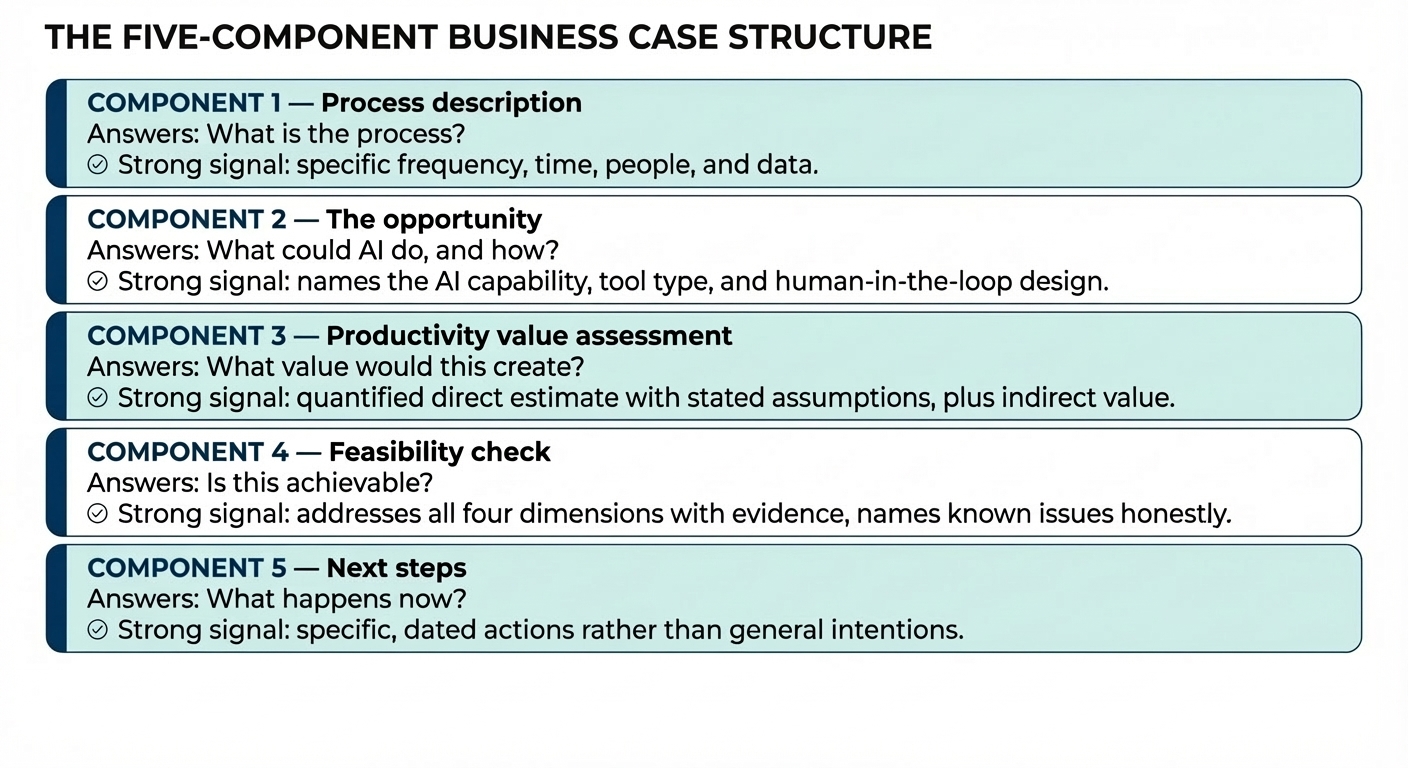

This lesson walks you through the five-component structure, shows you what strong and weak content looks like in each component, and then helps you prepare for the conversation that follows your written submission. The written document is important. The verbal defence is where the depth of your understanding actually becomes visible.

The five-component business case

Your business case should be approximately 600 to 900 words, structured across five components. It does not need to read like a corporate document — clear, specific, direct prose is more effective than formal language. Attach your workflow map from Unit 3 and include your suitability scoring summary as supporting evidence. Together, these three artefacts — the business case, the map, and the scoring — constitute your gateway submission.

Component 1: process description

This component answers the question: what is the process, and what does it currently look like? It should name the process precisely, describe who performs it, how often it runs, how long it takes, what data is involved, and what the output is. A reader who has never seen your organisation should be able to visualise the process from this description alone.

The most common weakness here is vagueness — describing the process at a category level rather than a specific operational level. Compare these two examples.

Weak: "The process involves handling customer enquiries. It takes a significant amount of time each week and currently requires manual effort from multiple team members."

Strong: "The process handles approximately 60 new client onboarding enquiries per week, each arriving by email. A team member reads each email, classifies it into one of four categories (new account, account change, document request, or general query), drafts a response from one of twelve standard templates, and logs the response in the CRM. Average handling time is 18 minutes per enquiry, of which about 12 minutes is classification and response drafting. Three team members share this work, spending roughly four hours each per week (about 12 hours in total) on classification and response drafting."

The strong version gives the reader everything they need: frequency, input type, steps, time investment, staffing, and data system. The workflow map from Unit 3 provides the visual equivalent of this description — reference it explicitly in this component.

Component 2: the opportunity

This component answers: what specifically could AI do here, and why is that approach the right one? It should identify which AI capability maps to the process — classification, drafting, extraction, summarisation, or retrieval — and name the type of solution that is most appropriate: a workflow automation, an AI-assisted tool, or an agent-based approach. Refer back to the solution types you explored in Unit 2.

The most common weakness here is being generic — saying "AI could automate this process" without specifying how. Compare these two examples.

Weak: "AI could be used to automate the enquiry handling process and save time."

Strong: "A classification and drafting workflow could be built using a no-code platform such as Make.com combined with a large language model prompt. Incoming emails would be classified automatically into the four standard categories with a confidence score. High-confidence classifications (above 85%) would trigger an automatic template response for human review before sending. Low-confidence classifications would be routed directly to a team member with the AI's suggested category noted. This removes the classification and drafting step from most enquiries while keeping human judgement in the loop for ambiguous cases."

The strong version identifies the specific AI mechanism, the tool type, the confidence threshold as a design parameter, the human-in-the-loop design, and the exception path. A coach reading this can tell that the learner understands what they are proposing to build.
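The routing logic in the strong example can be written down in a few lines, which is a useful check that the design is fully specified. The following is a minimal sketch in Python, not a Make.com configuration: the `Classification` type, the `route` function, the step names it returns, and the handling of an unrecognised category are all hypothetical names and assumptions introduced for illustration. Only the four categories and the 85% threshold come from the example.

```python
from dataclasses import dataclass

# Threshold from the strong example (85%). Treat it as a design parameter
# to tune during the pilot, not a fixed rule.
CONFIDENCE_THRESHOLD = 0.85

# The four standard categories named in the process description.
CATEGORIES = ("new account", "account change", "document request", "general query")


@dataclass
class Classification:
    category: str      # label returned by the (hypothetical) classifier
    confidence: float  # 0.0 to 1.0


def route(result: Classification, threshold: float = CONFIDENCE_THRESHOLD) -> str:
    """Return the next step for an enquiry, given its classification."""
    if result.category not in CATEGORIES:
        # Assumption: an unrecognised label is treated as an exception
        # and goes straight to a person.
        return "manual_triage"
    if result.confidence > threshold:
        # High confidence: draft from the template, human reviews before sending.
        return "draft_template_for_review"
    # Low confidence: a team member handles it, with the suggestion attached.
    return "route_to_team_member_with_suggestion"
```

Writing the rule out this way surfaces design questions a coach may ask, such as what happens exactly at the threshold (here, 85% itself counts as low confidence) and what happens when the model returns a label outside the agreed categories.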

Component 3: productivity value assessment

This component answers: what value would successful implementation create? Present your direct value estimate from Lesson 1 in full, with all assumptions stated. Then add a paragraph on indirect value — the improvements to quality, consistency, staff experience, or customer experience that the estimate does not capture.

The most common weakness here is either no quantification at all ("this will save significant time") or false precision ("this will save exactly £14,237 per year"). Both undermine credibility in opposite directions. The right register is honest approximation: "approximately 300 hours of capacity released annually, based on an estimated 80% automation rate of the classification step."

Weak: "This automation will save the team a lot of time and reduce errors."

Strong: "At current volume, the classification and drafting process consumes approximately 12 staff hours per week across three team members. Automating the classification step for high-confidence enquiries (estimated at 75–80% of total volume, based on the consistency of the four standard categories) would release around 9 hours per week, or approximately 414 hours over a 46-week working year. At an average all-in role cost of £22 per hour, this represents approximately £9,100 of capacity released annually for redeployment to higher-value client work. Indirectly, a consistent classification approach would reduce the error rate in template selection, currently estimated at around 8% based on a manual audit, which generates an average of one to two rework instances per day."

Notice that the strong version states the 75–80% estimate and its basis, uses "approximately" throughout, converts to an annual figure, uses "capacity released" rather than "cost saved", and adds a specific indirect value claim with its own evidence.
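The arithmetic behind the strong example is worth laying out so that every assumption is visible and easy to change. The sketch below is one way to do that in Python. The function name `capacity_released` is hypothetical; the inputs (12 staff hours per week, a 75–80% share, a 46-week year, £22 per hour) are taken from the example.

```python
def capacity_released(weekly_hours, automation_share, working_weeks, hourly_cost):
    """Return (hours per week, hours per year, value per year) of capacity released."""
    hours_per_week = weekly_hours * automation_share
    hours_per_year = hours_per_week * working_weeks
    value_per_year = hours_per_year * hourly_cost
    return hours_per_week, hours_per_year, value_per_year


# Run both ends of the 75–80% estimate so the result is a range, not a point.
for share in (0.75, 0.80):
    per_week, per_year, value = capacity_released(12, share, 46, 22)
    # Round for presentation: whole hours and the nearest £100, not pence.
    print(f"{share:.0%}: about {per_week:.1f} h/week, "
          f"{per_year:.0f} h/year, roughly £{round(value, -2):,.0f}")
```

At the 75% end this reproduces the figures in the example: about 9 hours per week, 414 hours per year, and roughly £9,100. Rounding to the nearest £100 is a deliberate choice that keeps the estimate in the register of honest approximation rather than false precision.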

Component 4: feasibility check

This component answers: is this achievable? Work through the four feasibility dimensions from Lesson 2 — data availability, process maturity, organisational readiness, and high-level risk awareness — in four short paragraphs. For each one, state your assessment and the evidence behind it. If a dimension has a known issue, name it and describe what needs to happen to resolve it.

Weak: "The data is available and the organisation supports this project. There are no significant risks."

Strong: "Data availability: the email inbox is accessible via the organisation's Microsoft 365 environment, which supports Make.com integration. Email content is unstructured but consistent enough in format and language for classification prompts to work reliably. One data readiness issue is that the CRM logging step currently requires a human to enter a free-text case summary; this will need to be templated or structured before automated logging is feasible.

Process maturity: the classification categories have been stable for 18 months and are documented in the team handbook.

Organisational readiness: the team leader has been briefed and has agreed to support a pilot. The IT team have confirmed that Make.com is approved for use with non-sensitive data.

High-level risk flags: the main risk is that emails containing sensitive client information are not currently filtered before reaching the shared inbox. This data handling question will be addressed formally in the Module 2 governance work, but a pilot scope limited to a specific non-sensitive enquiry type can proceed in the meantime."

The strong version is honest about the CRM logging gap, specific about the IT approval, and names the data sensitivity risk explicitly — including the plan to address it — rather than pretending it does not exist.

Component 5: next steps

This component answers: what happens next? List the specific actions you need to take before Module 2 begins. This is not a project plan — it is a short, concrete list of immediate actions: conversations you need to have, information you need to gather, approvals you need to secure, and any technical questions you need to resolve. It signals to your coach that you are already in motion.

Weak: "I will continue to develop my understanding of AI and begin working on the project."

Strong: "Immediate next steps: (1) Confirm with the IT team that Make.com can be used with the specific email inbox — the existing approval covers non-sensitive data but the inbox classification needs verification. (2) Run a manual audit of 100 recent enquiry emails to validate the 75–80% high-confidence classification estimate before committing to it in the design. (3) Agree with the team leader on the pilot scope — starting with the 'document request' category only, as it has the most consistent inputs. (4) Complete the Module 2 pre-work on data governance before designing the CRM logging component."
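The manual audit in step (2) is easier to use if the results are tallied in a consistent way. The following is a rough sketch, assuming each audited email is recorded as a pair of its category and a yes/no judgement on whether it was clear enough to classify confidently. That record format and the function name `audit_summary` are assumptions made for illustration, not part of the programme materials.

```python
from collections import Counter


def audit_summary(records):
    """Summarise a manual audit.

    records: iterable of (category, clear) pairs, where clear is True when
    the reviewer judged the email unambiguous enough to classify confidently.
    Returns (overall share, share per category).
    """
    totals = Counter()
    clear_counts = Counter()
    for category, clear in records:
        totals[category] += 1
        if clear:
            clear_counts[category] += 1
    if not totals:
        raise ValueError("no audit records supplied")
    overall = sum(clear_counts.values()) / sum(totals.values())
    by_category = {c: clear_counts[c] / totals[c] for c in totals}
    return overall, by_category
```

The overall share is the number to compare against the 75–80% estimate. The per-category breakdown supports step (3), since it shows which category has the most consistent inputs and is therefore the safest starting point for a pilot.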

Using AI to improve your draft

Once you have a complete first draft of all five components, AI-assisted editing is one of the most effective ways to improve it before submission. The goal here is not to have the AI rewrite your business case — it needs to reflect your thinking and your organisation's reality — but to use it as a precise editorial tool.

There are three specific prompts worth running on your draft.

The first is a specificity check: "Here is my business case draft: [paste]. For each of the five components, identify any claims that are vague, any places where a reader would want more specific evidence, and any sentences that could be made more precise without changing the meaning." This prompt reliably identifies the places where you have written a general statement where a specific one was possible.

The second is a challenge simulation: "You are a sceptical senior manager reviewing this business case. What are the three questions you would most want answered before approving this project? What would make you doubt the value estimate? What would make you question the feasibility?" The AI's simulated challenges are a preview of the questions your coach will ask at the gateway — and the ones your line manager may raise when you present to them.

The third is a scoping sanity check: "Based on this business case, does the proposed scope appear achievable within a six-to-eight-week build period for a practitioner who is learning as they go? What would you reduce or defer to make the scope more manageable if it currently feels too large?" This is particularly useful if you have been finding it difficult to bound the scope yourself.

Work through the AI's output critically. Accept the suggestions that sharpen your thinking and discard the ones that do not fit your context. Your submitted business case should sound like you — not like a language model.
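If you expect to run these checks on more than one draft, it can help to keep the three prompts as reusable templates. The sketch below only assembles the prompt text and does not call any AI service. The names `PROMPTS` and `build_prompts` are hypothetical, and appending the draft to the second and third prompts is an assumption for cases where each prompt is run in a fresh conversation.

```python
# The three prompts from this lesson, with {draft} marking where the
# business case text is pasted in.
PROMPTS = {
    "specificity": (
        "Here is my business case draft: {draft}\n\n"
        "For each of the five components, identify any claims that are vague, "
        "any places where a reader would want more specific evidence, and any "
        "sentences that could be made more precise without changing the meaning."
    ),
    "challenge": (
        "You are a sceptical senior manager reviewing this business case. "
        "What are the three questions you would most want answered before "
        "approving this project? What would make you doubt the value estimate? "
        "What would make you question the feasibility?\n\n"
        "Business case:\n{draft}"
    ),
    "scoping": (
        "Based on this business case, does the proposed scope appear achievable "
        "within a six-to-eight-week build period for a practitioner who is "
        "learning as they go? What would you reduce or defer to make the scope "
        "more manageable if it currently feels too large?\n\n"
        "Business case:\n{draft}"
    ),
}


def build_prompts(draft: str) -> dict[str, str]:
    """Fill each template with the draft and return the prompts by name."""
    if not draft.strip():
        raise ValueError("draft is empty")
    return {name: template.format(draft=draft) for name, template in PROMPTS.items()}
```

Each returned string can be pasted into whichever AI assistant your organisation has approved. Check that the draft contains no sensitive client information before doing so.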

Preparing for the verbal defence

Submit your written business case at least 48 hours before your coaching session. At the session, your coach will review it with you in a structured conversation. This is not a pass/fail examination; it is a professional dialogue about your project proposal. The coach's job is to test the depth of understanding behind the document, identify any gaps you need to address, and confirm that you are ready to progress to Module 2.

There are five questions you should be ready to answer fluently. Your written business case will have implied answers to all of them, but the coaching session asks you to defend those answers verbally, in real time, without looking at the document.

The first question is: why this process over the others you scored? Your answer should draw on your Effort/Value matrix — which candidates you considered, how you positioned them, and what the matrix revealed about relative priority. If you only scored one candidate seriously, explain what made the others less promising.

The second question is: what specific AI capability makes this automation viable? The answer should be precise: not "AI can handle this" but "a classification model prompted with the category definitions can consistently assign the correct category to 75–80% of enquiries based on the email subject line and first paragraph." Your understanding of the mechanism matters, not just the outcome.

The third question is: what would you do if the data access problem turns out to be harder than expected? Every business case has at least one dependency that has not been fully confirmed. Your coach will pick one and ask what your contingency is. Have a genuine answer ready — a reduced scope, an alternative data source, a manual interim step — rather than hoping the dependency resolves itself.

The fourth question is: what does a successful pilot look like twelve weeks from now? This is a scoping and ambition calibration question. A good answer describes a specific, observable output: "a working automation that handles the document request category of enquiries — currently around 15 per week — with a human review step before sending, and a log of classification accuracy over the first four weeks." A poor answer is vague about output and timeline.

The fifth question is: how will the people who currently do this process experience the change? This connects to K6 — the importance of designing AI systems that augment rather than replace human work. Your answer should show that you have thought about the change from the perspective of the people involved, not just the efficiency gain. Do they know about the project? Have they been involved in the process of identifying what to automate? What will they do with the capacity that is released?
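The answer to the fourth question mentions a log of classification accuracy over the first four weeks. One simple way to turn such a log into a weekly figure is sketched below. It assumes that each reviewed enquiry is recorded with the pilot week number, the category the automation suggested, and the category the human reviewer settled on. That log format and the function name `weekly_accuracy` are illustrative assumptions.

```python
from collections import defaultdict


def weekly_accuracy(log):
    """Return {week: share of enquiries where the suggestion matched the reviewer}.

    log: iterable of (week, predicted_category, reviewer_category) tuples.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for week, predicted, reviewed in log:
        total[week] += 1
        if predicted == reviewed:
            correct[week] += 1
    return {week: correct[week] / total[week] for week in sorted(total)}
```

A weekly figure like this gives you something specific and observable to point to when describing what a successful pilot looks like, and it relies on the human review step already built into the design.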

Unit 4 — Complete

You have now done the full analytical work of Module 1: built your AI literacy, explored real automation demonstrations, identified and evaluated candidate processes, mapped the current state of your strongest opportunity, quantified its value, assessed its feasibility, and written a structured business case arguing for it. That is not a small body of work, and the artefacts you have produced — the workbooks, the workflow map, the suitability scoring, and the business case — are the evidence of it.

What you can now demonstrate

By completing this unit, you have worked towards the following Knowledge, Skills and Behaviours:

| KSB | Description | Where it appears in this unit |
|---|---|---|
| K5 | Methods to identify opportunities to enhance productivity: improving processes, reducing waste, increasing satisfaction, optimising outcomes | Lessons 1 and 2: direct and indirect value, the Effort/Value matrix, feasibility checks; the business case value assessment component |
| K6 | The importance of designing AI and automation systems that augment rather than replace human work | Lesson 3: the fifth gateway question (how will the people who do this work experience the change?); Component 2 (human-in-the-loop design) |
| K9 | AI and automation concepts, models and limitations. The impact adoption may have on workplace culture and wellbeing | Lesson 2: organisational readiness, team resistance as a risk flag; Lesson 3: naming the specific AI capability in Component 2 |
| S6 | Review and complete workflow and process mapping to identify problems or inefficiencies and recommend solutions | Lesson 3: the business case references and builds on the Unit 3 workflow map; Component 1 draws directly on the current-state map |
| S13 | Identify opportunities to deliver automation. Support leaders in integrating ethical, empathetic approaches when decision-making | Lesson 2: high-level risk awareness; Lesson 3: gateway question 5 on people and change; the verbal defence |
| S14 | Support in the identification and evaluation of opportunities for increased productivity, including low/no-code tools and AI platforms | Lessons 1–3 throughout: the full business case production process |

Looking ahead to Module 2

The business case you have submitted is intentionally incomplete in one important respect: it has focused on value and feasibility while explicitly deferring the governance, legal, and ethical design questions to Module 2. That deferral was deliberate — those questions require a different analytical framework that the programme introduces properly in the next module.

In Module 2 you will apply a data governance lens to your chosen process, work through the responsible AI design considerations that apply to your specific automation type, understand the regulatory environment that affects how AI outputs can be used in your industry, and begin the technical design work that your business case has outlined but not yet specified. The opportunity you have identified and the groundwork you have laid in Unit 4 are the starting point for all of that work.

Level 4 AI & Automation Practitioner | Unit 4 — Lesson 3 of 3 | Version 1.0