Lesson 1 — Quantifying Value and Prioritising Your Opportunity

Unit 4 | Lesson 1 of 3

By the end of this lesson, you will be able to:

- Distinguish between direct and indirect productivity value and explain why both matter to senior decision-makers

- Produce a credible quantified value estimate for your chosen automation opportunity

- Use the Effort/Value matrix to compare multiple candidate processes and justify a selection

- Use an AI assistant to stress-test your value reasoning and surface assumptions you may have missed

From analysis to argument

In Unit 3, you did the diagnostic work: you identified candidate processes, scored them across five dimensions, and produced a current-state workflow map. You now know which process is your strongest candidate. The question Unit 4 asks is different — not "is this automatable?" but "is this worth doing, and can you prove it?"

That is a meaningful shift. The suitability framework assessed technical and organisational feasibility. The value assessment that opens this unit asks a commercial question: what does success actually deliver? A process can score highly on every suitability dimension and still not be worth automating if the value it creates is negligible, hard to measure, or invisible to the people who need to approve the project. Equally, a process with some feasibility challenges can be entirely worth pursuing if the value it unlocks is significant enough to justify the investment in resolving those challenges.

Your business case needs to make that argument. This lesson gives you the tools to make it well.

Direct and indirect value

Productivity value from automation comes in two forms, and understanding the distinction matters because senior decision-makers weight them differently.

Direct value is measurable and immediate. It shows up in numbers: hours saved per week, cost of errors avoided, volume of transactions processed without manual intervention, headcount capacity released for redeployment to higher-value work. Direct value is what you lead with in a business case, because it is the easiest to verify and the hardest to dismiss.

Indirect value is real but harder to quantify. It includes improvements to user or customer experience (faster response times, fewer mistakes reaching customers, more consistent outputs), reductions in staff frustration with repetitive low-value tasks, improvements to data quality that have downstream effects on decision-making, and strategic positioning — the organisation being seen to be using AI effectively in its operations. Senior leaders often care about indirect value as much as direct value, particularly when they are evaluating whether to invest in a pilot that might scale.

Neither type is more important than the other. A strong business case acknowledges both and is honest about which estimates are precise and which are directional.

💬 Reflection

Think about your chosen process. If it were automated successfully, who would benefit — and in what way? The person who currently does the task manually is the obvious answer, but think beyond them. Who receives the output of the process? Who makes decisions based on it? Who currently chases for updates because it runs slowly? Each of those stakeholders represents a potential indirect value claim.

How to build a credible value estimate

The most important word in the phrase "value estimate" is estimate. You are not expected to produce a financial model with four decimal places of precision. You are expected to produce a credible, specific, reasoned approximation that a sensible person could follow and challenge.

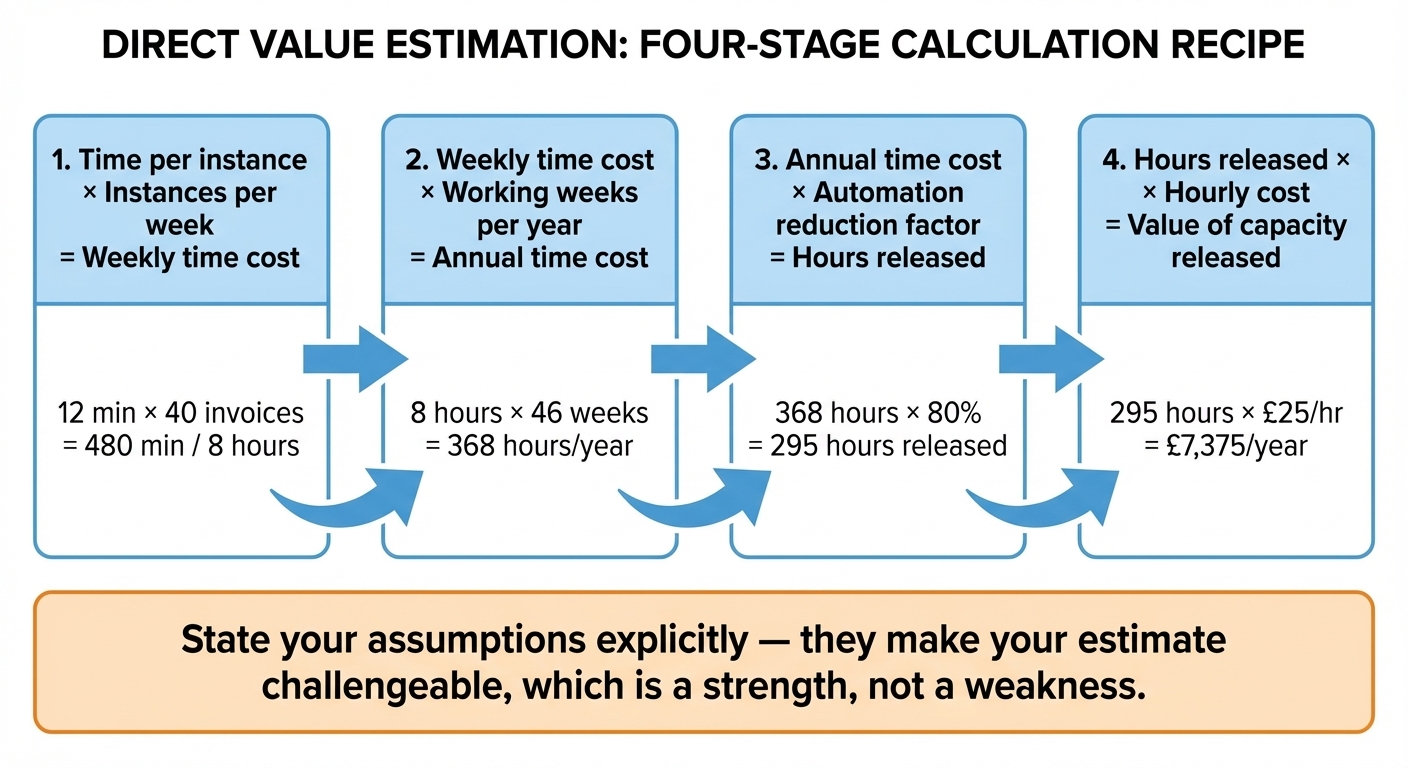

The structure for a direct value estimate is straightforward. Start with the current baseline: how long does one instance of this process take, how many instances occur per week or month, and how many people are involved? Multiply those together to get the total current time investment. Then apply a realistic reduction factor — the proportion of that time that automation could plausibly absorb — and calculate the released capacity. Convert that to hours per year, and if relevant, to an approximate cost using an average salary figure for the role involved.

A worked example makes this concrete. Suppose your process involves manually extracting data from approximately 40 supplier invoices per week, with each invoice taking around 12 minutes to process. That is 480 minutes (8 hours) of manual work per week, carried out by one person in the finance team. Over a working year of 46 weeks, that is approximately 368 hours. If an automated extraction pipeline handles 80% of invoices without manual intervention, it releases around 294 hours per year. At an average all-in cost of £25 per hour for the role, that is approximately £7,400 of capacity released annually: not saved, but available for redeployment to higher-value work.

Notice several things about that estimate. It uses round numbers and approximate figures throughout. It is explicit about the 80% assumption, which someone could challenge. It describes the value as capacity released rather than money saved, which is more honest. And it is specific enough that a line manager could recognise the process and evaluate whether the numbers feel right.
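The arithmetic in the worked example can be sketched in a few lines of Python, which makes it easy to swap in your own figures. This is a minimal illustration, not a prescribed tool: the class name, field names, and the `people` field (assumed here to mean the number of people each handling the stated volume) are all hypothetical. Only the invoice figures come from the example above.

```python
from dataclasses import dataclass


@dataclass
class DirectValueEstimate:
    """Inputs for a direct value estimate (all names are illustrative)."""
    minutes_per_instance: float
    instances_per_week: float
    working_weeks_per_year: float
    automation_rate: float  # assumed share of instances needing no manual work
    hourly_cost: float      # average all-in cost per hour for the role
    people: int = 1         # assumption: people each handling this volume

    def weekly_hours(self) -> float:
        # Baseline: time per instance x instances x people, in hours
        return self.minutes_per_instance * self.instances_per_week * self.people / 60

    def annual_hours(self) -> float:
        return self.weekly_hours() * self.working_weeks_per_year

    def hours_released(self) -> float:
        # Apply the reduction factor to the annual baseline
        return self.annual_hours() * self.automation_rate

    def capacity_released(self) -> float:
        # Value of released capacity, not a cash saving
        return self.hours_released() * self.hourly_cost


# Figures from the supplier invoice example
invoices = DirectValueEstimate(
    minutes_per_instance=12,
    instances_per_week=40,
    working_weeks_per_year=46,
    automation_rate=0.80,
    hourly_cost=25,
)

print(f"Weekly hours:      {invoices.weekly_hours():.0f}")
print(f"Annual hours:      {invoices.annual_hours():.0f}")
print(f"Hours released:    {invoices.hours_released():.0f}")
print(f"Capacity released: £{invoices.capacity_released():,.0f}")
```

Keeping each stage as a separate step mirrors the way a reviewer would challenge the estimate: every intermediate figure can be checked on its own.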

Validating and stress-testing your estimate with AI

Once you have built your initial value estimate, one of the most practical things you can do is ask an AI assistant to pressure-test it. Not to produce the estimate for you — the numbers must come from your knowledge of your own organisation — but to challenge the reasoning, surface assumptions you have not made explicit, and suggest value dimensions you may have overlooked.

A useful prompt for this looks like: "I have produced a value estimate for an automation opportunity at my organisation. Here is my reasoning: [paste your estimate]. Can you identify any assumptions I have made that are not explicitly stated, any value dimensions I may have missed, and any ways this estimate could be challenged by a sceptical senior stakeholder?"

The AI's response will not know your organisation, so some of its challenges will not be relevant. But the ones that are relevant are worth taking seriously. Common gaps that AI stress-testing surfaces include: failure to account for the time cost of exceptions (the 20% of invoices that do not get automated still need handling); failure to distinguish between capacity released and headcount reduced (the first is almost always achievable; the second rarely is without additional decisions); and failure to quantify the indirect value that the direct estimate makes invisible.
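One simple way to prepare for a sceptical challenge is to see how the estimate moves when the reduction factor changes. The sketch below reuses the invoice figures from the worked example (368 annual hours, £25 per hour). The 60%, 70%, and 90% rates are hypothetical alternatives chosen purely for illustration, and the function name is an assumption.

```python
ANNUAL_HOURS = 368   # 8 hours per week over 46 working weeks
HOURLY_COST = 25     # average all-in cost in pounds per hour


def sensitivity(annual_hours, hourly_cost, rates):
    """Return (rate, hours released, capacity value) for each automation rate."""
    rows = []
    for rate in rates:
        hours = annual_hours * rate
        rows.append((rate, round(hours), round(hours * hourly_cost)))
    return rows


# The 60%, 70% and 90% rates are hypothetical alternatives to the lesson's 80%.
table = sensitivity(ANNUAL_HOURS, HOURLY_COST, [0.6, 0.7, 0.8, 0.9])
for rate, hours, value in table:
    print(f"{rate:.0%} automated: {hours} hours released, about £{value:,}")
```

If the case for the project still holds at the lowest plausible rate, the 80% assumption becomes much harder to attack.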

This is a genuinely useful practitioner skill: using AI not as a generator of answers but as a critical thinking partner. The business case you produce will be stronger for having been challenged before you submit it.

The Effort/Value matrix

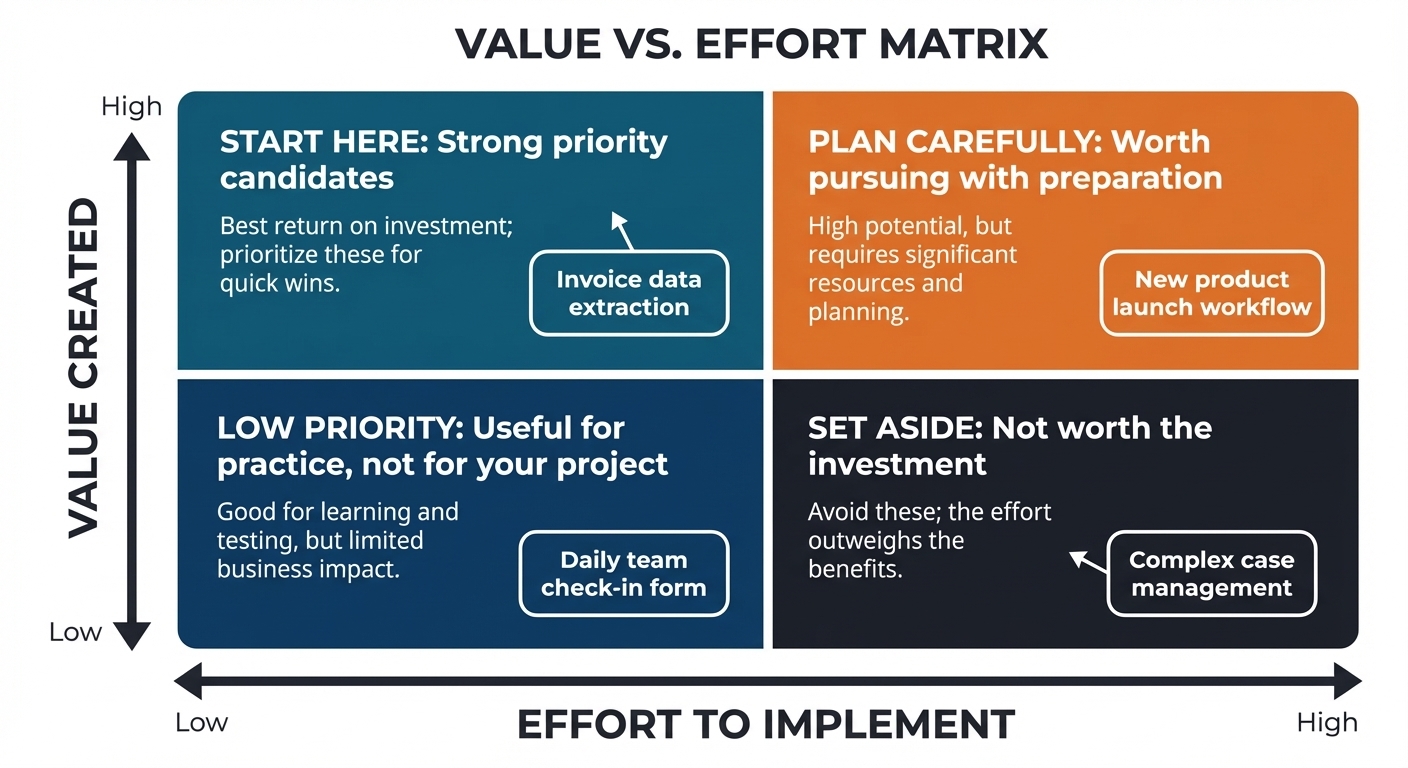

If you are entering Unit 4 with more than one strong candidate process from your Unit 3 scoring — or if you want to make a more rigorous case for why you chose the process you did — the Effort/Value matrix is the tool for that comparison.

The matrix plots candidate processes on two axes. The vertical axis represents the value the automation would create: a composite of the direct and indirect value you have estimated for each candidate, scored High, Medium, or Low relative to each other. The horizontal axis represents the effort required to implement: a composite of the technical complexity, data readiness challenges, and organisational friction you identified in your suitability scoring, again scored High, Medium, or Low.

The four quadrants that result each have a clear implication. Processes in the high-value, low-effort quadrant are your priority candidates — start here, build confidence, demonstrate results. Processes in the high-value, high-effort quadrant are worth pursuing but need more preparation, capability, or stakeholder alignment before they are ready. Processes in the low-value, low-effort quadrant are not priority projects — they might make good training exercises, but they should not consume your apprenticeship project slot. Processes in the low-value, high-effort quadrant are the ones to set aside, regardless of how technically interesting they might be.
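The quadrant logic can be expressed as a small lookup, sketched below. The function name and the candidate processes are hypothetical. Treating a Medium rating as a borderline case is an assumption of this sketch, since the four quadrants above are defined only for High and Low.

```python
RECOMMENDATIONS = {
    # (value, effort): implication described in the lesson
    ("High", "Low"): "Priority candidate: start here",
    ("High", "High"): "Worth pursuing, but needs more preparation first",
    ("Low", "Low"): "Not a priority; possibly a training exercise",
    ("Low", "High"): "Set aside",
}


def recommend(value: str, effort: str) -> str:
    """Map a (value, effort) rating to the lesson's quadrant implication."""
    for rating in (value, effort):
        if rating not in ("High", "Medium", "Low"):
            raise ValueError(f"Unknown rating: {rating!r}")
    # Assumption: a Medium rating on either axis sits on a quadrant boundary.
    return RECOMMENDATIONS.get((value, effort), "Borderline: needs a written judgement")


# Hypothetical candidates, for illustration only.
candidates = {
    "Invoice data extraction": ("High", "Low"),
    "Contract review": ("High", "High"),
    "Meeting-room booking": ("Low", "Medium"),
}
for name, (value, effort) in candidates.items():
    print(f"{name}: {recommend(value, effort)}")
```

A borderline result is a prompt to explain your reasoning in writing rather than a verdict in itself.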

The matrix is a communication tool as much as an analytical one. When you present your business case to your skills coach at the gateway, being able to show that you considered multiple candidates and made a reasoned comparative judgement — rather than simply advocating for the first process you thought of — demonstrates exactly the kind of independent analytical thinking the apprenticeship standard requires.

For the distinction-level criterion, your business case should show the matrix with at least two candidates positioned and a written paragraph explaining what the positioning reveals and why it supports your selection.

📝 Activity 1 — Value estimation and prioritisation

Complete before your coaching session | Estimated time: 60 minutes

Using the Value Estimation Worksheet in your Unit 4 Workbook, complete the following two tasks.

First, build your direct value estimate for your chosen process. Work through the five-stage calculation: time per instance, instances per week or month, annual time cost, automation reduction factor, and hours released. State every assumption explicitly. Then add a short paragraph (three to five sentences) on the indirect value the automation would create: improvements to quality, consistency, staff experience, or customer experience that the numbers do not capture.

Second, plot your Effort/Value matrix. If you scored more than one candidate process in Unit 3, position each one on the matrix and write a paragraph — four to six sentences — explaining what the positioning reveals and why it supports your selection. If you worked with only one candidate, position it on the matrix and explain how it scored on both axes using your Unit 3 suitability dimensions as evidence for the effort estimate.

Distinction-level criterion: Your Effort/Value matrix must include at least two candidates with written comparative analysis. A business case that shows you chose the best option from a considered shortlist is substantially stronger than one that advocates for a single candidate without comparison.

Bring your completed worksheet to your coaching session. Your coach will use it to check the robustness of your value reasoning before you write the full business case.

⏭️ Up next — Lesson 2: With your value estimate built and your priority process selected, Lesson 2 turns to the questions that will determine whether your chosen opportunity is genuinely achievable — and whether you have been honest with yourself about what could get in the way.