Lesson 1 — The Human Impact of Automation

Module 2, Unit 1 | Lesson 1 of 3

By the end of this lesson, you will be able to:

- Describe the spectrum of impact that AI and automation can have on roles and teams, from task displacement to role transformation to new role creation (K3)

- Explain why the distinction between automating a process and automating a person's job matters, and how to communicate that distinction to colleagues (K3)

- Identify the groups most likely to be affected by an automation initiative and least likely to have been consulted in its design (K3)

- Recognise the real-world consequences of failing to consider human impact in automation design, and identify the practitioner behaviours that help prevent them (K3, B1)

A different kind of question

You arrive at this unit having already done substantial analytical work. You have identified a real process in your organisation, scored it across suitability dimensions, mapped it in detail, and built a business case for why it is worth automating. You know what is possible. This module asks something different.

The new question is not technical. It is: what happens to the people whose working day includes that process when you automate it? What do they gain, what do they lose, and what do they fear — whether or not those fears are technically justified? Your responsibility, as the person designing the change, is to understand and respond to those questions before you build anything.

These are not soft questions that sit alongside the real work. They are the real work. AI projects that fail to engage with them do not just cause unnecessary anxiety for colleagues. They frequently fail outright — through resistance, disengagement, poor adoption, or the quiet continuation of the manual process running in parallel with the automated one because nobody trusts the output. Understanding the human side of automation is not an ethical nicety. It is a practical requirement.

The spectrum of impact

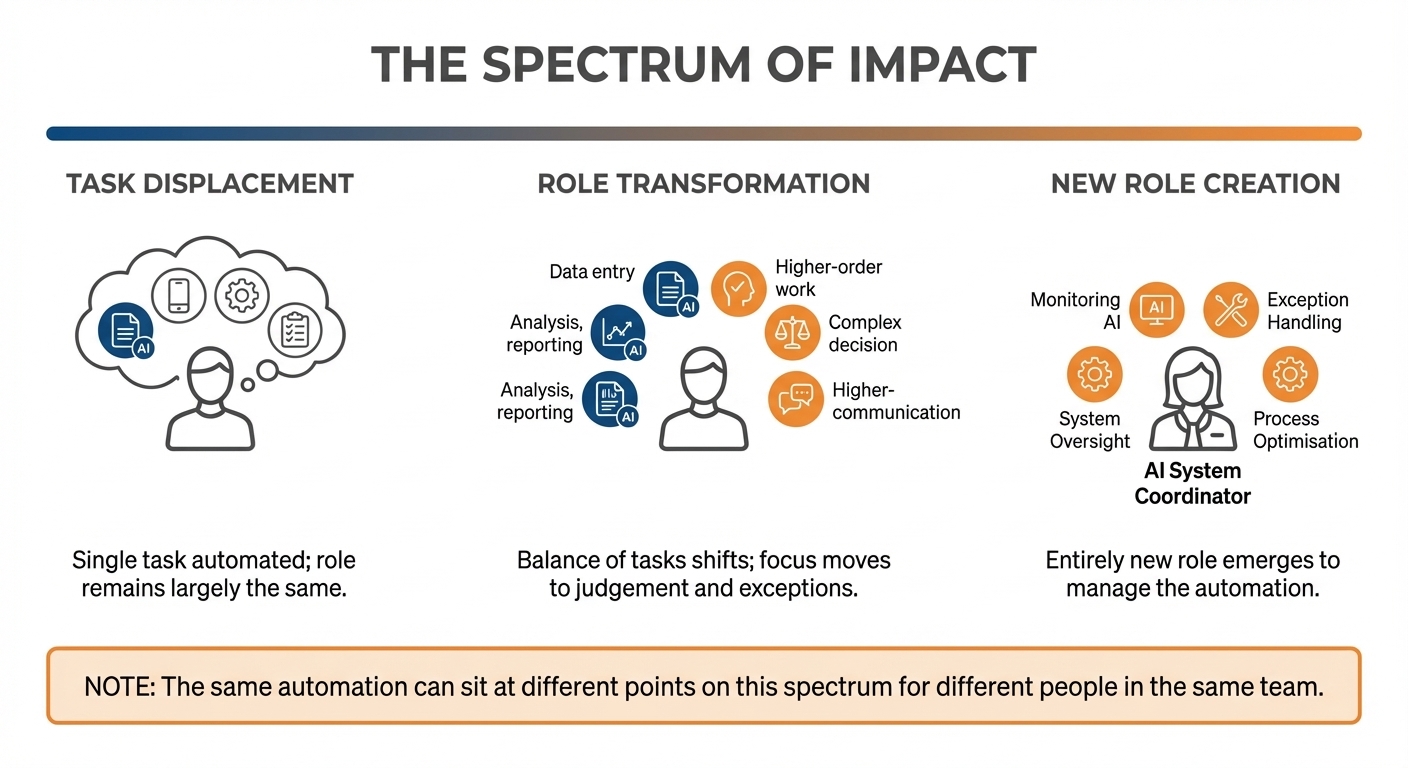

When people talk about AI's impact on work, the conversation often collapses into a binary: jobs are either safe or at risk. The reality is considerably more varied, and understanding that variation is essential to designing automation responsibly.

The spectrum of impact runs across three broad categories. They are not mutually exclusive — in a single team, different people may experience the same automation in three different ways at the same time.

Task displacement is the most targeted form of impact. A specific, bounded task within someone's role is taken over by an automated system, but the role itself remains largely intact. The person keeps the same job and the same title, and still performs the majority of their work. What changes is one particular part of their day. If that task was one they found repetitive and low-value, displacement can be experienced positively. If it was a task that gave them a sense of craft or expertise, even a technically simple one, its loss can feel significant, regardless of how it looks from the outside.

Role transformation is broader. AI absorbs enough tasks that the overall nature of the role changes, and how the person spends their time looks materially different. A customer service agent who previously spent 70% of their time answering routine queries may find, post-automation, that routine queries are handled entirely by an AI assistant and their work is now focused on complex escalations, relationship management, and edge cases requiring judgement. This is not the elimination of their role. It is a different role with the same job title. Whether that transformation is experienced as enrichment or as deskilling, as an upgrade or as a loss, depends heavily on how it is designed, communicated, and supported.

New role creation is the category that receives the most attention in optimistic accounts of AI's impact on work, and the least scrutiny. New roles do emerge from automation. Someone needs to monitor the automated system, handle exceptions, maintain the prompts, and interpret the output. The honest question is whether the people whose tasks were displaced are the ones who end up in those new roles, or whether those roles require skills they do not currently have and may not be supported to develop.

💬 Reflection

Think about the process you are planning to automate. Which of these three categories best describes its likely impact on the person or people currently doing it? Is the whole role affected, or just one part of it? Importantly — is the person affected likely to experience that impact as positive, negative, or mixed?

There is no right answer. The point is to form a considered view before you speak to that person, not after.

The process and the person are not the same thing

One of the most important communication habits you can develop as an AI practitioner is the discipline of distinguishing between a process and the person who does it. These are different things, and conflating them — even in passing — creates unnecessary alarm.

When you say "I am automating the invoice processing workflow," you are describing a change to a process. What a colleague may hear is "my job is being automated," which describes a threat to their livelihood and identity. The technical accuracy of the first statement does not prevent the second interpretation. In fact, in a workplace where AI is discussed in terms of efficiency savings and headcount implications (which is most workplaces), the second interpretation is often the more rational one.

This does not mean you should be evasive or avoid the subject. It means you should be precise and specific. "The data extraction step in invoice processing will be handled automatically — the part of your day that involves opening each PDF and typing the supplier name and amount into the system. What that releases is time for the review and exception-handling work, which still needs you." That is a different conversation from "I am automating invoice processing," even though both statements are technically accurate.

None of this means making promises you cannot keep. If the automation genuinely does reduce the need for a particular role at a particular headcount, that is a workforce planning question for leadership, not something for you to mask in reassuring language. Your responsibility is to be specific about what is changing and what is not, rather than letting people fill that gap with assumptions that are often more alarming than the reality.

Who gets affected and who gets consulted

The people most likely to be affected by an automation initiative are frequently the people least likely to have been involved in designing it. This is a structural pattern across AI adoption in organisations, and it is worth being explicit about it.

Automation decisions tend to originate with managers, analysts, or technical practitioners who have the vocabulary and the organisational access to identify and propose them. The colleagues who perform the process being automated, often in operational, administrative, or frontline roles, are typically consulted late, if at all. Their knowledge of the process, which is usually far more detailed than anyone else's, is treated as information to be extracted in a workshop rather than expertise to be included in the design process.

This matters for practical reasons as well as ethical ones. The people who do a process every day know things about it that do not appear in any documentation: the informal workarounds, the edge cases that occur every few weeks, the reason why a particular step that looks redundant actually serves a purpose nobody has written down. Building an automation without access to that knowledge produces a system that handles the documented process well and the actual process poorly.

The stakeholder conversation in this unit's activities is partly about professional behaviour and responsible practice. It is equally about making your automation better.

💬 Reflection

For the process in your own project: who performs it, and have they been involved in any of your analysis so far? If you have been working primarily from your own knowledge of the process, what might you be missing? What would the person who does this daily know that you do not?

When it goes wrong — and when it goes right

Abstract principles about human impact are easier to absorb when they are grounded in real situations. The two cases below illustrate what happens when an AI deployment ignores the human dimension — and what a more considered approach can look like.

Amazon's AI recruiting tool (2018).¹ Amazon built a machine learning system to screen CVs and identify strong candidates automatically, with the aim of reducing the manual burden on recruiters. The tool was trained on CVs submitted to the company over a ten-year period, and that data reflected a decade of hiring patterns in a male-dominated industry. According to Reuters, the system learned to penalise CVs that included the word "women's" (as in "women's chess club") and downgraded graduates of two all-women's colleges. In other words, the tool systematically disadvantaged female applicants based on patterns in historical data, not on any genuine assessment of candidate quality. Reuters reported in October 2018 that Amazon had abandoned the project.

The lesson here is not simply that AI can be biased — it is that the people whose application outcomes were affected had no visibility of or voice in the system's design. The design question "whose experience is shaped by this output, and have we considered them?" was not asked early enough. You will explore the legal dimensions of equality and discrimination in Unit 2. For now, the important point is that the human impact of this automation was significant, specific, and entirely preventable had it been considered from the outset.

Klarna's customer service automation (2024).² The Swedish payments company Klarna publicly reported that, in its first month of deployment, its AI assistant was handling the equivalent workload of 700 full-time customer service agents, with customer satisfaction scores matching those of human agents. Human agents remained in the workflow for complex queries, escalations, and cases requiring judgement or empathy. The automation absorbed high-volume, routine interactions while the human team focused on the work that required them most.

What made this a more considered deployment was not just the technical outcome — it was the design decision to retain human involvement for the cases where it mattered most, rather than pursuing full automation. The humans in the process were not an afterthought or a temporary measure pending further AI improvement. They were a deliberate part of the design.

These two cases sit at opposite ends of the same question: who did you think about when you designed this, and what happened to them? The practitioner's responsibility is to ask that question before building — and to design accordingly.

Sources

¹ Dastin, J. (2018, October 10). Amazon scraps secret AI recruiting tool that showed bias against women. Reuters. reuters.com

² Klarna. (2024, February 27). Klarna AI assistant handles two-thirds of customer service chats in its first month. Klarna Newsroom. klarna.com

💬 Reflection

Looking at the process you are planning to automate: whose experience will be shaped by its outputs — not just the person performing it, but the people receiving those outputs? Have any of those people been part of your analysis so far? If the answer is no, that is worth noting before you go further.

Automation bias: when humans stop thinking

One of the more counterintuitive risks of working with AI systems is not that people distrust them too much, but that they trust them too much.

🔑 Key term: Automation bias — the tendency for people to over-rely on automated systems, accepting their outputs without applying the judgement or verification they would apply to the same information from a human source.

Automation bias is well documented across high-stakes domains such as aviation, medicine, and financial services, but it occurs in routine workplace settings too. When a well-designed AI system produces confident-looking, neatly formatted outputs at speed and at scale, it creates a strong implicit cue that the output is correct. Review steps that were designed to catch errors become rubber stamps. Colleagues who were supposed to verify outputs before they were acted on stop verifying them, because the system has never been wrong in living memory, until the day it is.

The practical implication for your design work is this: a human review step is only meaningful if the person conducting it has the information, time, and authority to actually challenge the output. A checkbox that says "manager approved" is not meaningful oversight if the manager is approving 50 outputs in five minutes (six seconds each) without the underlying data in front of them. You will return to this in Lesson 2 when you design your human oversight checkpoints.

What transformation looks like in practice

Concrete examples help here. AI has changed the nature of work across a range of sectors without eliminating the roles involved.

In radiology, AI systems can now screen medical images for anomalies with high accuracy. Radiologists have not been replaced. Their work has shifted towards reviewing the cases the AI flags, resolving those it marks as uncertain, and applying clinical judgement to complex presentations. The volume of images they review has increased, and the nature of the review has changed.

In legal research, large language models can produce first-draft summaries of case law at a speed no paralegal can match. The paralegal's role has not gone — it has become one of directing the research, evaluating the output for accuracy, and identifying the gaps that the AI did not know to look for. The judgement work remains human. The data retrieval work does not.

In financial analysis, AI tools can generate variance reports and flag anomalies in datasets in seconds. Finance analysts increasingly spend their time interpreting those flags, communicating findings to stakeholders, and making the contextual judgements about which anomalies matter and which do not.

What these examples share is a pattern: the AI handles volume, pattern recognition, and retrieval at speed. The human handles uncertainty, context, accountability, and communication. Each is better suited to its own part of the work than the other would be. The skill in designing good human-AI collaboration is understanding precisely where that boundary runs in your specific process, and we turn to that in Lesson 2.

⏭️ Up next: Lesson 2. Having established what is at stake for the people affected by your automation, you will examine how to design the relationship between human and AI in your workflow, including the three models of human supervision and what meaningful oversight actually requires.