Lesson 2 — Human-AI Collaboration and Oversight

Module 2, Unit 1 | Lesson 2 of 3

By the end of this lesson, you will be able to:

- Explain what human-AI collaboration means in practical terms and distinguish it from AI simply performing a task (K15)

- Describe the three supervision models — human-in-the-loop, human-on-the-loop, and human-out-of-the-loop — and identify when each is appropriate (K15)

- Evaluate what makes a human review step meaningful rather than nominal, and identify the conditions under which it can fail (K15)

- Identify the steps in your own workflow where human judgement adds most value and propose an initial set of oversight checkpoints (K15)

What collaboration actually means

The phrase "human-AI collaboration" is used widely, but it is worth being precise about what it means in practice — because the imprecise version creates confusion that leads to poorly designed systems.

Collaboration does not mean humans and AI doing the same thing together, in the way two colleagues might write a report jointly. It means humans and AI doing different things that complement each other, with each handling the part of the work it is better suited to. The AI handles volume, speed, consistency, and pattern recognition at scale. The human handles uncertainty, context, accountability, communication, and judgement in cases where the right answer is not deterministic.

The reason this distinction matters is that it changes how you design the workflow. If you think of the AI as doing your job faster, you will design a system where the AI does everything and the human checks it afterwards — which often means the human does not really check it at all, because there is no clear mental frame for what they are looking for. If instead you think of the AI and the human as occupying different positions in the workflow — each doing what they do best — you design a system with genuine handoff points, clear criteria for when the human needs to engage, and meaningful things for the human to do when they do engage.

💬 Reflection

Look at your workflow map. Without changing anything yet, read through the steps and ask yourself: which of these steps involves recognising a pattern in structured data? Which involves making a judgement call where the right answer depends on context you hold but cannot easily write down? The first category is where AI adds most value. The second is where human involvement is most important. Where do the two types of step sit in your specific process?

The three supervision models

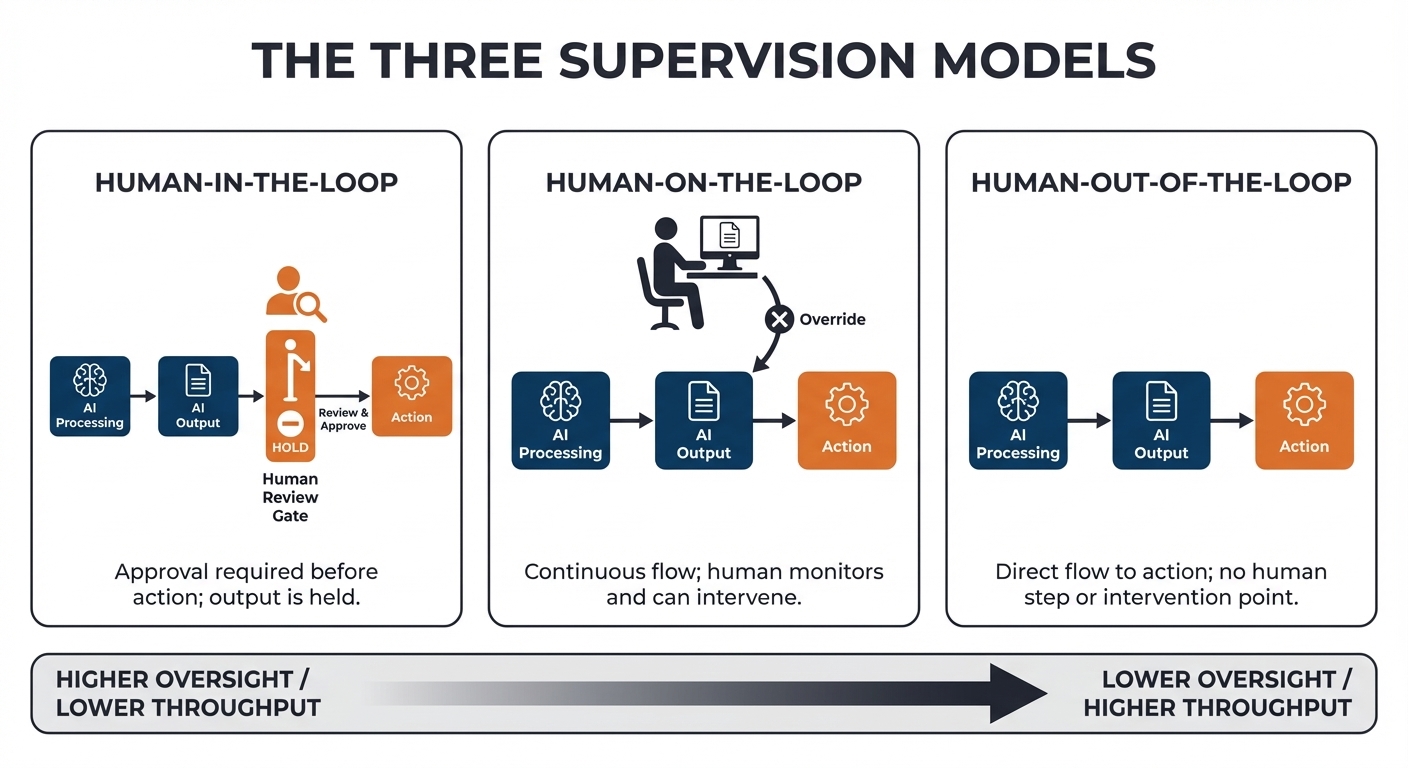

The design question that follows from collaboration thinking is: how much direct human involvement does a given automated step require? There is a spectrum of answers, and the field uses three named positions on that spectrum as reference points.

🔑 Key term: Human-in-the-loop — a supervision model in which a human reviews and approves each AI output before it is used or acted upon. The AI proposes; the human decides.

Human-in-the-loop is the most intensive form of oversight. The AI processes the input and produces an output — a classification, a draft, a recommendation — but that output is held until a human has reviewed it and confirmed it is acceptable. Nothing downstream happens until that confirmation is given. This model is appropriate where the consequence of an error is significant, where the output will be seen by customers or used in a high-stakes decision, or where the AI's confidence in its own output is variable and you cannot reliably filter for the cases where it is likely to be wrong.

The cost of human-in-the-loop is throughput. If a human must review every output, the speed advantage of automation is partially offset by the time that review takes. In practice, this trade-off is often worth making — particularly in early deployment, when the system is new and you do not yet have enough operational experience to know where it fails. Many well-designed automations begin as human-in-the-loop and gradually relax oversight as evidence accumulates that the system performs reliably on particular categories of input.
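One way to make "gradually relax oversight as evidence accumulates" concrete is to keep a tally of review outcomes per input category. The sketch below is illustrative only: the `ReviewLog` class, its method names, and the default thresholds (200 reviews, 98% approval) are assumptions made for this example, not values taken from any standard.

```python
from collections import defaultdict


class ReviewLog:
    """Tallies human review outcomes per input category (hypothetical helper)."""

    def __init__(self, min_reviews=200, min_approval_rate=0.98):
        # Both thresholds are illustrative assumptions; set them for your own context.
        self.min_reviews = min_reviews
        self.min_approval_rate = min_approval_rate
        self._total = defaultdict(int)
        self._approved = defaultdict(int)

    def record(self, category, approved):
        """Record one human review decision for an AI output in this category."""
        self._total[category] += 1
        if approved:
            self._approved[category] += 1

    def can_relax(self, category):
        """True only when there is enough evidence AND the approval rate is high."""
        total = self._total.get(category, 0)
        if total < self.min_reviews:
            return False  # not enough operational experience yet
        return self._approved.get(category, 0) / total >= self.min_approval_rate
```

The decision is made per category, so a system can stay human-in-the-loop for unusual inputs while oversight is relaxed for the routine ones it has handled reliably.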

🔑 Key term: Human-on-the-loop — a supervision model in which the AI acts autonomously, but a human monitors the system's outputs and can intervene to override or correct them. The human is watching, not approving in advance.

Human-on-the-loop describes a system where the AI operates without waiting for human approval, but a human is monitoring its outputs and has the ability — and the genuine capacity — to intervene when something looks wrong. This is a more efficient model than human-in-the-loop, but it makes different demands. For it to work as intended, the monitoring task must be genuinely feasible: the human must have time to look, tools that surface anomalies clearly, and authority to stop or correct the system when they identify a problem. A human-on-the-loop arrangement where the monitor has 200 outputs to review in 30 minutes is not real oversight — it is the appearance of oversight.

Human-out-of-the-loop means the AI acts fully autonomously without live human involvement. This model can be entirely appropriate for low-stakes, high-volume, easily reversible actions where the cost of occasional errors is negligible. Automatically formatting a document, sorting emails into folders, or generating a summary that a human will read before acting on — these are plausible candidates. It is inappropriate where the action is irreversible, the consequence of error is significant, or the system is operating in a context it was not designed for.
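The criteria for the three models can be sketched as a simple decision rule. This is a minimal illustration, not a definitive policy: the `Step` fields, the three-level `error_cost` scale, and the choice to send every irreversible or unvalidated step to human-in-the-loop are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Supervision(Enum):
    IN_THE_LOOP = "human-in-the-loop"
    ON_THE_LOOP = "human-on-the-loop"
    OUT_OF_THE_LOOP = "human-out-of-the-loop"


@dataclass
class Step:
    name: str
    error_cost: str   # hypothetical scale: "negligible", "moderate", "significant"
    reversible: bool  # can the action be undone easily?
    validated: bool   # has the system proved reliable on this kind of input?


def choose_supervision(step: Step) -> Supervision:
    # Significant consequences, irreversible actions, or an unproven system:
    # hold every output until a human approves it (a conservative assumption).
    if step.error_cost == "significant" or not step.reversible or not step.validated:
        return Supervision.IN_THE_LOOP
    # Low-stakes, easily reversible, validated: let the AI act on its own.
    if step.error_cost == "negligible":
        return Supervision.OUT_OF_THE_LOOP
    # Everything in between: the AI acts, and a human monitors and can intervene.
    return Supervision.ON_THE_LOOP


print(choose_supervision(Step("sort emails into folders", "negligible", True, True)).value)
# human-out-of-the-loop
```

In a real workflow the inputs to this rule are judgement calls in their own right. The value of writing the rule down is that those judgements become explicit and open to discussion.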

What makes oversight meaningful

The three supervision models describe structures. The quality of oversight, however, depends on what happens inside those structures, and that is where many well-intentioned designs fall short.

A human review step is only meaningful if the person reviewing has three things: the information needed to evaluate the output, the time to do so properly, and the authority to reject or correct it. Remove any one of those three, and the review step becomes a nominal gate — something that looks like oversight but does not function as it. Consider each in turn.

- Information: if a reviewer sees the AI's output but not the input it was based on, they cannot verify whether the output makes sense. A document classification system that shows the assigned category but not the original document, or a customer response tool that presents the AI draft but not the original query, asks reviewers to approve outputs they cannot actually evaluate.

- Time: if a reviewer is expected to check 40 outputs per hour across a queue that never empties, the implicit message is that speed matters more than scrutiny. The review step will be conducted accordingly.

- Authority: if a reviewer has been told the system is accurate and any rejection will generate a support ticket and a conversation with a manager, the practical cost of using their judgement is high enough that most people will not use it.

Designing for meaningful oversight means actively addressing all three conditions in the workflow design — not assuming that adding a review step solves the problem.
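The three conditions can be turned into a quick check on any proposed review step. The sketch below is a rough aid under stated assumptions: the `ReviewStep` fields are hypothetical, and `min_seconds_per_item` is the team's own estimate of how long a proper review takes, not a measured value.

```python
from dataclasses import dataclass


@dataclass
class ReviewStep:
    shows_original_input: bool   # information: can the reviewer see the input too?
    items_per_session: int       # how many outputs the reviewer is expected to check
    session_minutes: float       # how long they have to check them
    min_seconds_per_item: float  # assumption: your estimate of a proper review
    can_reject_freely: bool      # authority: is rejecting an output penalty-free?


def seconds_per_item(step: ReviewStep) -> float:
    """Average time available for each output."""
    return step.session_minutes * 60 / step.items_per_session


def oversight_gaps(step: ReviewStep) -> list:
    """Return which of the three conditions the review step fails."""
    gaps = []
    if not step.shows_original_input:
        gaps.append("information")
    if seconds_per_item(step) < step.min_seconds_per_item:
        gaps.append("time")
    if not step.can_reject_freely:
        gaps.append("authority")
    return gaps


# The monitoring example from earlier in the lesson: 200 outputs in 30 minutes.
monitor = ReviewStep(
    shows_original_input=True,
    items_per_session=200,
    session_minutes=30,
    min_seconds_per_item=60,  # illustrative assumption
    can_reject_freely=True,
)
print(seconds_per_item(monitor))  # 9.0
print(oversight_gaps(monitor))    # ['time']
```

Nine seconds per output shows why that arrangement only looks like oversight. By the same arithmetic, 40 outputs per hour leaves 90 seconds each, which may or may not be enough depending on how long the inputs are.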

💬 Reflection

Look at the human oversight steps you imagined for your workflow in Lesson 1's reflection. For each one: does the reviewer have the full input visible, not just the output? Is the expected review time realistic given the volume? Is there a genuine mechanism for them to reject or correct an output, or does the system treat rejection as an error state?

The collaboration sweet spot

In most workflows, not every step requires the same level of human involvement. The skilled practitioner maps the workflow and identifies, for each step, where human judgement adds distinct value — not where it adds reassurance or creates the appearance of control, but where it genuinely does something the AI cannot do as well.

Human judgement tends to add the most value at three types of point. The first is exception handling: the cases that fall outside the pattern the AI was designed for, where the right response requires contextual knowledge that was not in the training data or the prompt. The second is high-stakes decisions: the outputs that will be acted upon in ways that are consequential, irreversible, or visible to people outside the team. The third is quality calibration: periodic sampling of outputs to check whether the system is drifting from the standard it was designed to maintain — catching gradual deterioration that might not be visible in any single output but becomes apparent in the pattern.

Steps where human involvement adds least distinctive value are typically the ones involving routine data handling: extracting fields from a structured form, classifying an input into one of a small number of defined categories, generating a first draft of a templated communication. These are the steps where the AI can operate with low oversight once the system has been validated, and where human review may add more friction than value after a reasonable operational period.
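Quality calibration, the third point described above, can be sketched as periodic random sampling followed by a comparison against a baseline. The function names, the baseline rate, and the tolerance below are all assumptions made for illustration. A real check would also need a sample large enough for the comparison to mean something.

```python
import random


def sample_for_review(output_ids, sample_size, seed=None):
    """Pick a random subset of outputs for a human to check."""
    ids = list(output_ids)
    rng = random.Random(seed)  # a seed makes the draw repeatable for audit purposes
    return rng.sample(ids, min(sample_size, len(ids)))


def check_drift(review_results, baseline_rate, tolerance):
    """review_results: one boolean per sampled output, True if the reviewer accepted it.

    Returns the observed acceptance rate and whether it has fallen more than
    `tolerance` below the baseline the system was validated at.
    """
    if not review_results:
        raise ValueError("no reviewed outputs to evaluate")
    rate = sum(review_results) / len(review_results)
    return rate, rate < baseline_rate - tolerance


# Illustrative numbers: 18 of 20 sampled outputs accepted, against a 95% baseline.
rate, drifting = check_drift([True] * 18 + [False] * 2, baseline_rate=0.95, tolerance=0.03)
print(rate, drifting)  # 0.9 True
```

No single output in that sample need look alarming. The drift only shows up in the rate across the sample, which is why this check is done on a sample rather than output by output.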

Two ways of working together

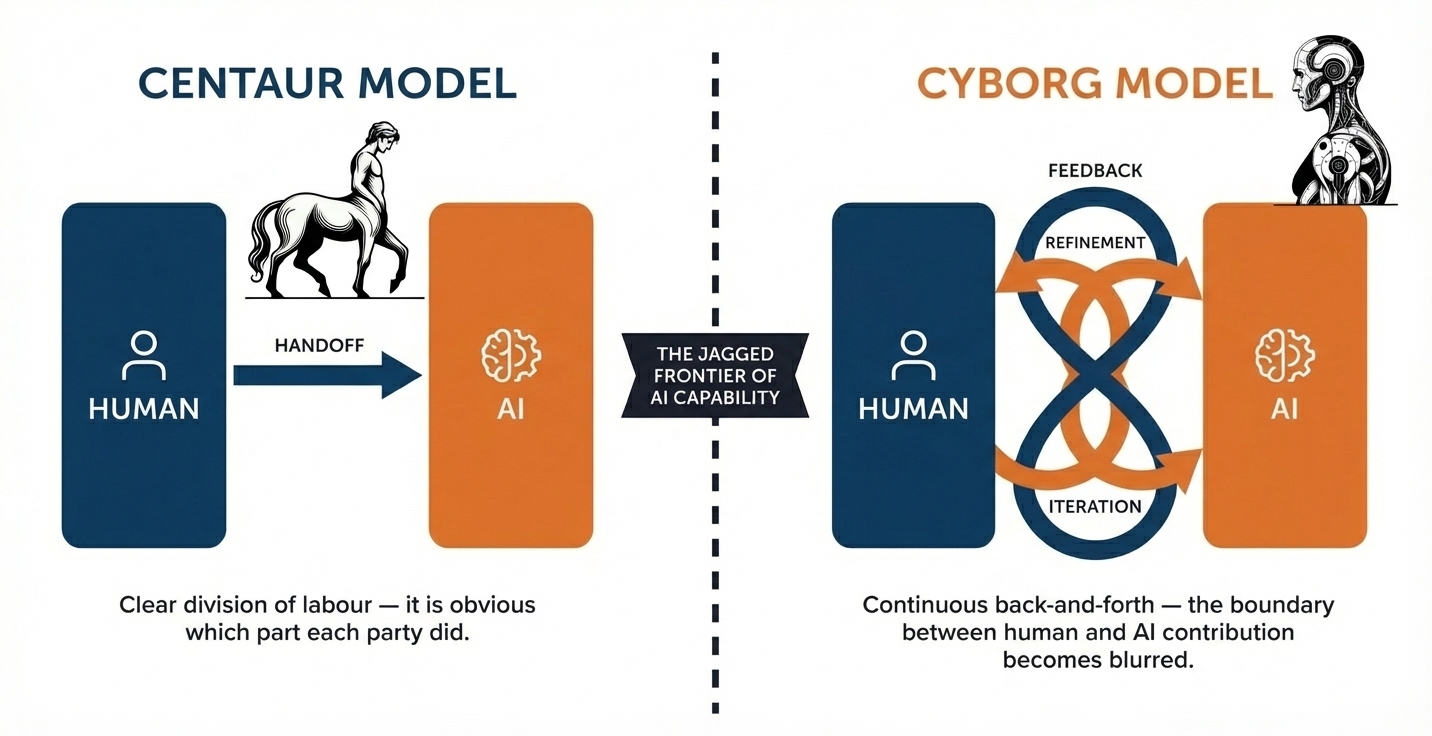

Within the broad frame of human-AI collaboration, practitioners and researchers have identified two recognisably different working patterns. The centaur image is associated with chess grandmaster Garry Kasparov's human-plus-computer "advanced chess", and the centaur and cyborg labels were later used by Harvard Business School researchers studying how people navigate the capabilities and limits of generative AI. Understanding them can help you identify which fits your context, and why.

Centaur vs. Cyborg

The first pattern is the centaur model, named after the mythological half-human, half-horse. In centaur collaboration, humans and AI each own distinct subtasks, with a clear division of labour between them. The human identifies what needs doing and directs the work; the AI handles the defined portion assigned to it; the human takes over for the steps requiring judgement, relationship, or contextual knowledge. In retrospect, it is clear which part was done by the human and which by the AI. This model works well when you have a reasonable understanding of what the AI can do reliably — because that confidence is what allows you to hand off a task and trust the output without extensive back-and-forth.

Typical centaur-style tasks look like this:

- A human writes a text; the AI translates it

- A human drafts content; the AI edits or summarises it

- The AI suggests a structure or outline; the human fills it with content and judgement

- A human provides data; the AI generates a first-pass report

The interaction tends to be short: input goes in, output comes out, and the human continues working with the result.

The second pattern is the cyborg model, where human and AI work in a continuous, iterative back-and-forth rather than taking turns at discrete tasks. The human is always involved; the AI is always present as an active participant. The interaction moves back and forth across what researchers call the "jagged frontier" of AI capability — the uneven boundary between what AI does reliably and where human judgement is still essential. In a cyborg workflow, it is often difficult, if not impossible, to say afterwards which part the human wrote and which the AI produced.

Typical cyborg-style tasks look like this:

- Collaborative creative writing, where the human steers and reshapes as they go

- Developing a problem statement step by step, with the AI asking questions and the human refining answers

- Structuring and tailoring a complex document — such as interview questions for a specific candidate — through iterative prompting

- Any workflow where the human is interrogating the AI, following up on its responses, and steering it toward a better outcome in real time

Neither pattern is inherently better. The right choice depends on the step, not the person. In practice, most real workflows contain both — some steps benefit from clean centaur delegation, others from cyborg-style continuous collaboration. A useful example: in a candidate screening process, an HR employee reading through applicants is well-served by a centaur approach — AI generates summaries, human reviews them. But a hiring manager preparing for a video interview benefits from a cyborg approach — working back and forth with AI to tailor questions, identify follow-up areas, and sharpen their thinking for that specific candidate.

The useful question to ask of each step in your workflow map is: do I want to hand this off and get a result back, or do I want to stay in the loop throughout?

💬 Reflection

Looking at your workflow map, are there steps where a clean centaur handoff makes sense — where you can clearly define what the AI should produce and take over from there? Are there other steps where you would want the AI alongside you throughout, rather than working separately? Identifying which pattern applies where is a useful first step in designing the human side of your automation.

Source

Zwingmann, T. (2024, March 22). Centaurs vs. Cyborgs. Profitable AI. blog.tobiaszwingmann.com

📝 Activity 1 — Human-AI Collaboration Reflection

Estimated time: 30 minutes

Complete before your next 1:1 and bring your annotated map.

Return to your workflow map. Looking at it with fresh eyes after this lesson's content, mark on the map: (a) the steps where you currently imagine the AI doing the work, and (b) the steps where you currently imagine a human doing the work.

Then ask yourself: at each AI step, what would happen if the output were wrong? Is there a human review point before the output is used? If not, is that acceptable — and why? Write three to four sentences for each AI step in your workflow explaining your thinking.

You will revisit and refine these annotations later in the programme when you build your full responsible design layer. For now, the goal is a considered first pass that you can discuss with your coach.

The following two articles explore human-AI collaboration in more depth, including the centaur and cyborg models introduced in this lesson, real-world implementation examples across sectors, and practical guidance on designing effective collaboration. They are written for a practitioner audience and are directly relevant to the design work you are doing in this unit.

- Liminary (2025). Human-AI collaboration: finding the sweet spot (Part I). https://liminary.io/blog/human-ai-collaboration-finding-the-sweet-spot-part-1

- Liminary (2025). Human-AI collaboration: finding the sweet spot (Part II). https://liminary.io/blog/human-ai-collaboration-finding-the-sweet-spot-part-ii

⏭️ Up next — Lesson 3: With the principles of human oversight established and your initial checkpoints identified, Lesson 3 turns to wellbeing, sustainable practice, and the stakeholder conversation that grounds all of this in your actual workplace.