Lesson 2 — Employment Law, Equality, and Responsible Automation

Module 2, Unit 2 | Lesson 2 of 3

By the end of this lesson, you will be able to:

- Explain the employment law considerations that arise when AI automation changes the nature of a job role, and identify when consultation obligations may apply

- Describe how the Equality Act 2010 applies to AI systems, and explain why a technically neutral algorithm can still produce discriminatory outcomes

- Identify the legal and ethical limits of using AI to monitor employee behaviour, and explain what lawful monitoring requires

- Describe the UK's approach to responsible AI regulation and the sector-specific guidance that applies in common professional contexts

When AI changes the job

It is tempting to think of employment law as a separate matter from AI design — something that HR deals with while practitioners build the technical solution. That separation does not hold up in practice.

When an AI system materially changes the nature of a job role — the tasks performed, the skills required, the way performance is measured, or the level of autonomy a person exercises — it may trigger employment law obligations that the organisation must address before deployment, not after.

Change to terms and conditions: if an automation changes what a job requires so substantially that it amounts to a change to the employment contract, that change must be agreed with the employee. Imposing it unilaterally can constitute a breach of contract.

Redundancy: if an automation removes the need for a role entirely, or reduces the number of roles required, UK redundancy law applies. Specifically, if 20 or more redundancies are proposed at one establishment within a period of 90 days or less, collective consultation with employee representatives is required under the Trade Union and Labour Relations (Consolidation) Act 1992. Below that threshold, individual redundancies must still follow a fair process.
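The 20-in-90-days threshold described above can be sketched as a sliding-window check. This is an illustration only, not a legal test: the function name and the list-of-dates input are hypothetical, and the sketch assumes every proposed dismissal is at the same establishment and that "within 90 days" means dates fewer than 90 days apart.

```python
from datetime import date

# Thresholds as stated in the lesson text. Everything else here is an
# assumption made for illustration; real cases need HR or legal review.
THRESHOLD = 20
WINDOW_DAYS = 90


def may_need_collective_consultation(proposed_dates: list[date]) -> bool:
    """Return True if any 90-day window holds 20 or more proposed dismissals."""
    dates = sorted(proposed_dates)
    start = 0
    for end, current in enumerate(dates):
        # Advance the window start until it is fewer than 90 days behind.
        while (current - dates[start]).days >= WINDOW_DAYS:
            start += 1
        if end - start + 1 >= THRESHOLD:
            return True
    return False


# Example: 12 dismissals proposed in January and 9 more in February.
proposals = [date(2025, 1, 15)] * 12 + [date(2025, 2, 20)] * 9
print(may_need_collective_consultation(proposals))
```

A True result is a prompt to raise the question with HR early, not a conclusion about what the law requires in a particular case.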

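Consultation obligations: even where redundancy is not the direct outcome, organisations may have obligations under the Information and Consultation of Employees Regulations 2004 to inform and consult employees about significant changes that affect their employment, including the introduction of AI systems that materially change how work is organised. These Regulations apply to undertakings with 50 or more employees, and the duties typically arise under an existing information and consultation agreement or following a valid employee request.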

The implication is not that you need to manage a redundancy process. It is that when your automation proposal would substantially change what people do — not just a minor efficiency, but a material shift in role — you should flag this to the relevant stakeholders in your organisation before the project moves to implementation. The flag protects both the affected employees and the organisation.

💬 Reflection

Looking at your own automation project: does it substantially change what the person currently doing this process would do with their working day? Is it a task displacement, a role transformation, or something that removes the need for the role entirely? (You explored this framing in Unit 1.) If the answer is role transformation or beyond, who in your organisation would need to be informed before deployment?

Key References:

- ACAS guidance on redundancy: https://www.acas.org.uk/redundancy

- Information and Consultation of Employees Regulations 2004 (ICE Regulations): https://www.legislation.gov.uk/uksi/2004/3426/contents

The Equality Act 2010 and AI

The Equality Act 2010 prohibits discrimination on the basis of nine protected characteristics: age, disability, gender reassignment, marriage and civil partnership, pregnancy and maternity, race, religion or belief, sex, and sexual orientation. The Act covers direct discrimination (treating someone less favourably because of a protected characteristic) and indirect discrimination (applying a policy or practice that disadvantages people with a protected characteristic, even if applied equally on the surface).

Direct vs. indirect discrimination

| | Direct discrimination | Indirect discrimination |
|---|---|---|
| Definition | Treating someone less favourably because of a protected characteristic | Applying a neutral rule or practice that puts a protected group at a particular disadvantage |
| Intent required? | No. Intent is irrelevant; the treatment itself is the issue | No. Disproportionate impact is enough, regardless of intent |
| AI example | An algorithm explicitly filters out candidates over 50 | An algorithm trained on historical data replicates past biases, disadvantaging women or ethnic minorities without any explicit characteristic as input |
| The legal test | Was this person treated worse because of who they are? | Does this practice produce a worse outcome for a protected group, and can it be objectively justified? |
| Can it be justified? | No. Direct discrimination cannot be justified (with very limited exceptions) | Yes, if it is a proportionate means of achieving a legitimate aim |

AI systems are not automatically exempt from the Equality Act because they are automated. An algorithm that produces outputs that disproportionately disadvantage people with a protected characteristic has potentially caused indirect discrimination — even if no one intended that outcome and even if the protected characteristic is not an explicit input to the model.

How this happens in practice

Training data is the most common route to unintended discrimination. If an AI model is trained on historical data that reflects past discriminatory practices — historical hiring decisions, historical loan approvals, historical performance ratings — it learns to replicate those patterns. The model has no awareness that it is doing so. It is optimising for predictive accuracy on the training data, and the training data embeds the historical inequality.

The Amazon AI recruiting example from Lesson 1 of this unit illustrates this directly: the model penalised CVs containing the word "women's" because historical hiring data contained fewer successful female candidates in that industry. The algorithm was technically neutral. The outcome was discriminatory.

Did you know?

Amazon began developing this AI recruiting tool in 2014 and had abandoned it by early 2017 after discovering the bias problem; the story became public in 2018. The tool had been trained on CVs submitted over a ten-year period, most of which came from men, reflecting the historic gender imbalance in the tech industry. The model taught itself that male candidates were preferable. Amazon's engineers tried to adjust the system to remove the bias, but could not guarantee it would not find other proxies for gender. The story became one of the most widely cited examples of algorithmic bias in recruitment, and a key reason why AI hiring tools are now subject to specific regulatory scrutiny in several jurisdictions.

Other examples in common automation use cases:

- Sentiment analysis tools trained predominantly on data from one demographic group may systematically misclassify expressions from other groups.

- Performance monitoring systems that penalise patterns of behaviour (response times, active hours, communication frequency) may disadvantage employees with disabilities, caring responsibilities, or religious observance patterns.

- Customer routing or pricing algorithms trained on postcode or location data may produce outcomes that correlate with race or deprivation without any explicit racial input.

The test under the Equality Act is not whether discrimination was intended. It is whether a protected group was put at a disadvantage — and whether that disadvantage can be objectively justified.
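One way to start looking for the kind of disadvantage described above is to compare outcome rates between groups. The sketch below is a minimal illustration under stated assumptions: the function names, the `(group, selected)` record format, and the sample figures are all hypothetical, and a rate ratio is only a screening signal. The Equality Act sets no numerical threshold, and the legal question also includes whether the practice can be objectively justified.

```python
from collections import Counter


def selection_rates(records):
    """Map each group to its share of positive outcomes.

    records: iterable of (group, selected) pairs, where selected is a bool.
    """
    totals = Counter()
    selected = Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def rate_ratios(rates, reference_group):
    """Express each group's rate relative to a chosen reference group."""
    reference = rates[reference_group]
    if reference == 0:
        raise ValueError("reference group has no positive outcomes")
    return {group: rate / reference for group, rate in rates.items()}


# Made-up sample: 100 outcomes per group, for illustration only.
sample = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 20 + [("B", False)] * 80
)
rates = selection_rates(sample)
print(rates)
print(rate_ratios(rates, "A"))
```

In this made-up sample, group B is selected at half the rate of group A. A gap like that does not establish discrimination on its own, but it is the sort of finding to document and raise with HR, legal, or equality colleagues.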

Key References:

- Equality Act 2010: https://www.legislation.gov.uk/ukpga/2010/15/contents

- Equality and Human Rights Commission guidance on indirect discrimination: https://www.equalityhumanrights.com/equality/equality-act-2010/what-is-discrimination

- ACAS on equality in the workplace: https://www.acas.org.uk/discrimination-and-the-law

Monitoring and surveillance at work

AI-enabled monitoring of employees — tracking productivity, analysing communications, measuring time on task, monitoring keystrokes or mouse movements — is a growing area of AI adoption that carries significant legal and ethical risk.

The ICO has been clear that employee monitoring must have a lawful basis under UK GDPR, must be proportionate to the stated purpose, and must be transparent: employees must be told what is being monitored and why. Covert monitoring is only permissible in very narrow circumstances, such as where there is a reasonable suspicion of serious wrongdoing and disclosure would prejudice the investigation.

Beyond data protection, surveillance-level monitoring may implicate the Human Rights Act 1998, specifically Article 8 (the right to respect for private and family life), which applies in employment contexts.

From a practical standpoint, the legal risks of AI monitoring are compounded by the equality risks. Productivity monitoring systems that flag employees as underperforming based on time-on-task metrics may disproportionately affect employees with disabilities (whose working patterns may legitimately differ), employees with caring responsibilities, or employees working across time zones.

The practitioner's responsibility is to recognise when a proposed automation crosses from workflow management into monitoring — and to ensure that the legal and HR implications are assessed before the system is designed, not after it has been running for three months.

Key References:

- ICO guidance on monitoring workers: https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/employment/monitoring-workers/

- Human Rights Act 1998: https://www.legislation.gov.uk/ukpga/1998/42/contents

The UK's approach to responsible AI regulation

The UK does not yet have a single overarching AI Act equivalent to the EU's AI Act. Instead, the UK government has taken a principles-based, sector-led approach: establishing high-level principles that all AI systems should meet, while leaving sector-specific regulators to determine how those principles apply in their domains.

The five cross-sectoral principles for responsible AI in the UK are:

- Safety, security, and robustness — AI systems should function as intended and be resilient to attack or misuse.

- Appropriate transparency and explainability — users and affected people should be able to understand AI outputs to the extent necessary for the context.

- Fairness — AI systems should not discriminate unfairly against individuals or groups.

- Accountability and governance — clear lines of responsibility should exist for AI system outcomes.

- Contestability and redress — there should be mechanisms for people to challenge AI decisions and seek remedy.

These principles are not legislation — but they are increasingly embedded in sector-specific regulatory expectations. Practitioners working in regulated sectors should understand the guidance from their relevant regulator.

Financial services: the FCA and PRA have published expectations on AI governance, model risk management, and explainability. The joint Bank of England and FCA discussion paper on artificial intelligence and machine learning (2022) is directly relevant.

- FCA AI guidance: https://www.fca.org.uk/publications/multi-firm-reviews/findings-review-firms-approaches-artificial-intelligence

Healthcare — NHS England and the MHRA have published frameworks for AI as a medical device and for AI-assisted clinical decision support.

- NHSX AI frameworks: https://transform.england.nhs.uk/ai-lab/ai-lab-programmes/regulating-the-ai-ecosystem/

Public sector — the Cabinet Office's Algorithmic Transparency Recording Standard requires public sector bodies to publish details of algorithmic systems that assist significant decisions.

- Algorithmic Transparency Standard: https://www.gov.uk/government/collections/algorithmic-transparency-recording-standard

Cross-sector: the ICO has published guidance on AI and data protection, together with an AI and Data Protection Risk Toolkit, which provides practical guidance on assessing data protection risk across the AI lifecycle.

- ICO guidance on AI and data protection: https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/artificial-intelligence/guidance-on-ai-and-data-protection/

💬 Reflection

What sector does your organisation operate in? Does a sector-specific regulator have published guidance on AI use in your area? If you are uncertain, the ICO's guidance hub is a good starting point for any sector that processes personal data. Checking whether your sector has specific AI or algorithmic guidance is one of the items in your Legal Compliance Checklist in the unit activities.

The EU AI Act

The EU AI Act (Regulation (EU) 2024/1689) entered into force on 1 August 2024. It is the world's first comprehensive horizontal AI regulation, meaning it applies across sectors and use cases rather than being limited to a specific industry. It matters to practitioners outside the EU as well as within it, because its scope depends on where AI systems are placed on the market and where their outputs are used, not only on where the organisation building or deploying the AI is based.

Who it applies to

The EU AI Act applies to:

- AI providers placing systems on the EU market, regardless of where those providers are established

- AI deployers using AI systems within the EU

- Importers and distributors of AI systems in the EU

- Organisations outside the EU whose AI outputs are used by people in the EU

If your organisation is UK-based but works with EU clients, delivers services to EU customers, or deploys AI that affects EU residents, the EU AI Act may apply to your system. This is a direct parallel to the jurisdictional logic of EU GDPR.

A risk-tiered approach

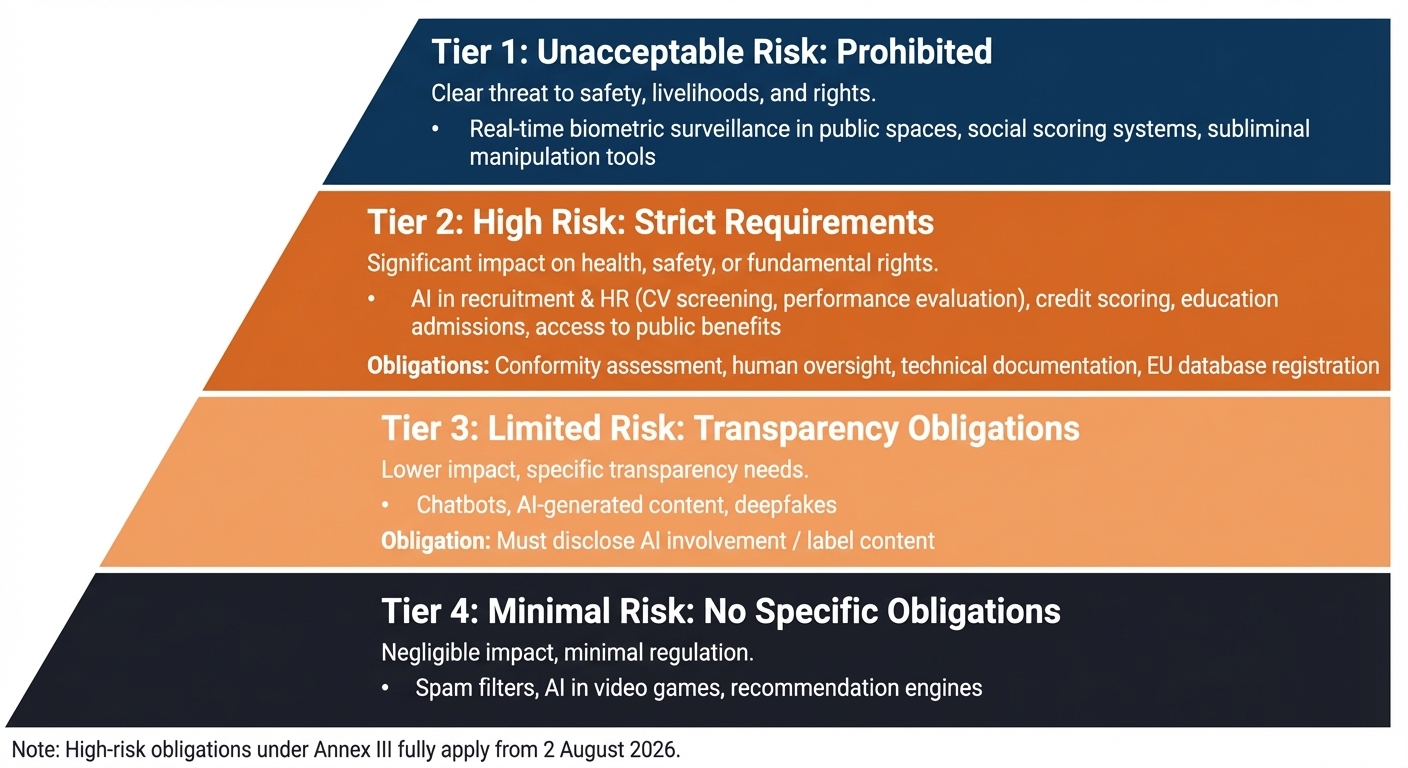

Unlike the UK's principles-based framework, the EU AI Act takes a legislative, risk-tiered approach. AI systems are categorised into four tiers, each with distinct obligations:

Unacceptable risk: prohibited. AI systems in this category are banned outright. Examples include real-time remote biometric identification in publicly accessible spaces for law enforcement (with narrow exceptions), AI systems that manipulate people through subliminal techniques, and social scoring systems.

High risk — subject to strict requirements. This is the tier most directly relevant to AI automation practitioners. High-risk AI systems must meet obligations before they can be placed on the EU market or put into service. The high-risk categories that most commonly arise in organisational AI use include:

- Employment and HR: AI used for recruitment (CV screening, job matching), performance evaluation, task allocation, promotion or termination decisions, and monitoring during work

- Credit and financial services: AI used for creditworthiness assessment

- Education and vocational training: AI used for admissions, assessment, or monitoring

- Access to essential services: AI involved in decisions about public benefits, housing, or health

High-risk obligations include: conducting a conformity assessment, maintaining technical documentation, implementing human oversight mechanisms, ensuring accuracy and robustness, logging system operation, and registering the system in the EU's AI database.

Limited risk: transparency obligations. Chatbots must make clear to users that they are interacting with an AI system, and AI-generated content, including deepfakes, must be labelled as such.

Minimal risk — no specific obligations. The majority of AI applications fall here. Spam filters, AI in video games, and similar tools require no specific regulatory action.
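For an early project triage, the four tiers can be held as a simple lookup. The sketch below is hypothetical throughout: the `RiskTier` enum, the use-case labels, and the `triage` function are invented for illustration, and the mapping only repeats the examples named in this lesson. Actual classification depends on the Act's text and annexes and needs legal review.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "prohibited"
    HIGH = "strict requirements"
    LIMITED = "transparency obligations"
    MINIMAL = "no specific obligations"


# Hypothetical labels for examples mentioned in the lesson text.
EXAMPLE_TIERS = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "cv_screening": RiskTier.HIGH,
    "performance_evaluation": RiskTier.HIGH,
    "creditworthiness_assessment": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}


def triage(use_case: str) -> str:
    """Return a first-pass note for a use case; unknown cases go to review."""
    tier = EXAMPLE_TIERS.get(use_case)
    if tier is None:
        return f"{use_case}: not in the example list, refer for legal review"
    return f"{use_case}: {tier.name.lower()} risk ({tier.value})"


print(triage("cv_screening"))
print(triage("shift_scheduling"))
```

Defaulting unknown use cases to "refer for legal review" rather than to minimal risk is a deliberate choice in this sketch, so that anything not explicitly listed gets looked at by someone qualified.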

What this means for your practice

If your organisation operates only in the UK and the outputs of its AI systems are not used in the EU, the EU AI Act is unlikely to apply. Even so, the UK government is actively monitoring its implementation and has indicated it may introduce complementary legislation. Practitioners working across UK and EU markets, or in organisations with EU operations, need to assess both frameworks.

For AI automation projects in employment, recruitment, performance management, or customer credit — the most common practitioner use cases — the EU AI Act's high-risk classification is directly relevant. If your organisation operates in EU markets and your system falls into a high-risk category, the obligations are substantially more demanding than the UK's current principles-based approach, and they are legally enforceable.

Key References:

- EU AI Act full text: https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32024R1689

- EU AI Act high-risk use cases (Annex III): https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32024R1689#anx_III

- Ada Lovelace Institute — EU AI Act explainer: https://www.adalovelaceinstitute.org/resource/eu-ai-act/

- UK DSIT AI regulatory approach: https://www.gov.uk/government/publications/ai-regulation-a-pro-innovation-approach

📝 Activity 1 continued — Section 3: Employment and Equality

Return to your Legal Compliance Checklist and complete this section now.

- Does this automation materially change the nature of anyone's job role? If yes, describe the nature of the change: task displacement, role transformation, or reduction in headcount.

- If headcount is affected, have the relevant HR or leadership stakeholders been made aware? Is collective consultation required under the Trade Union and Labour Relations (Consolidation) Act 1992, or information and consultation under the Information and Consultation of Employees Regulations 2004?

- Could the AI component produce outputs that disadvantage people with a protected characteristic, either directly or through proxy variables in the training data or input features? Describe any risk you can identify, even if you cannot fully resolve it at this stage.

- Does your automation involve any monitoring of employee behaviour or communications? If yes, have the lawful basis, proportionality, and transparency requirements been considered?

- Flag: What areas here require further investigation or input from HR, legal, or equality colleagues?

💡 Section 4 — Transparency and Accountability draws on Lesson 3 content. Complete it after you have worked through that lesson.

⏭️ Up next — Lesson 3: With the legal foundations in place, Lesson 3 turns to the ethical principles that apply across all of these areas — and introduces the unit's three activities, including the Legal Stress Test.