Lesson 2 — The Process Suitability Framework

Unit 3 | Lesson 2 of 3 | Estimated time: ~30 minutes

By the end of this lesson, you will be able to:

- Apply the five-dimension Process Suitability Framework to evaluate candidate processes

- Write specific, evidence-based rationale for each dimension score rather than general impressions

- Distinguish between a high-potential candidate and one that needs further groundwork before automation is viable

- Explain why scoring across multiple dimensions produces better decisions than relying on a single criterion

Why a framework matters here

In Lesson 1, you developed the habit of describing work as processes rather than tasks. You should now have a mental longlist — processes you have noticed in your own role or team that involve repetition, structured inputs, and predictable outputs. The next question is which of those candidates are genuinely worth pursuing.

It is tempting to answer that question on instinct. "This one takes ages" or "everyone hates doing this" are real signals, but they are incomplete ones. A process that takes a long time might take a long time because it is genuinely complex and variable — and complexity and variability are exactly what makes automation difficult. A process that colleagues dislike might be disliked because it involves sensitive judgements that carry personal accountability — and removing that accountability from a human is not always appropriate, regardless of how unpleasant the task feels.

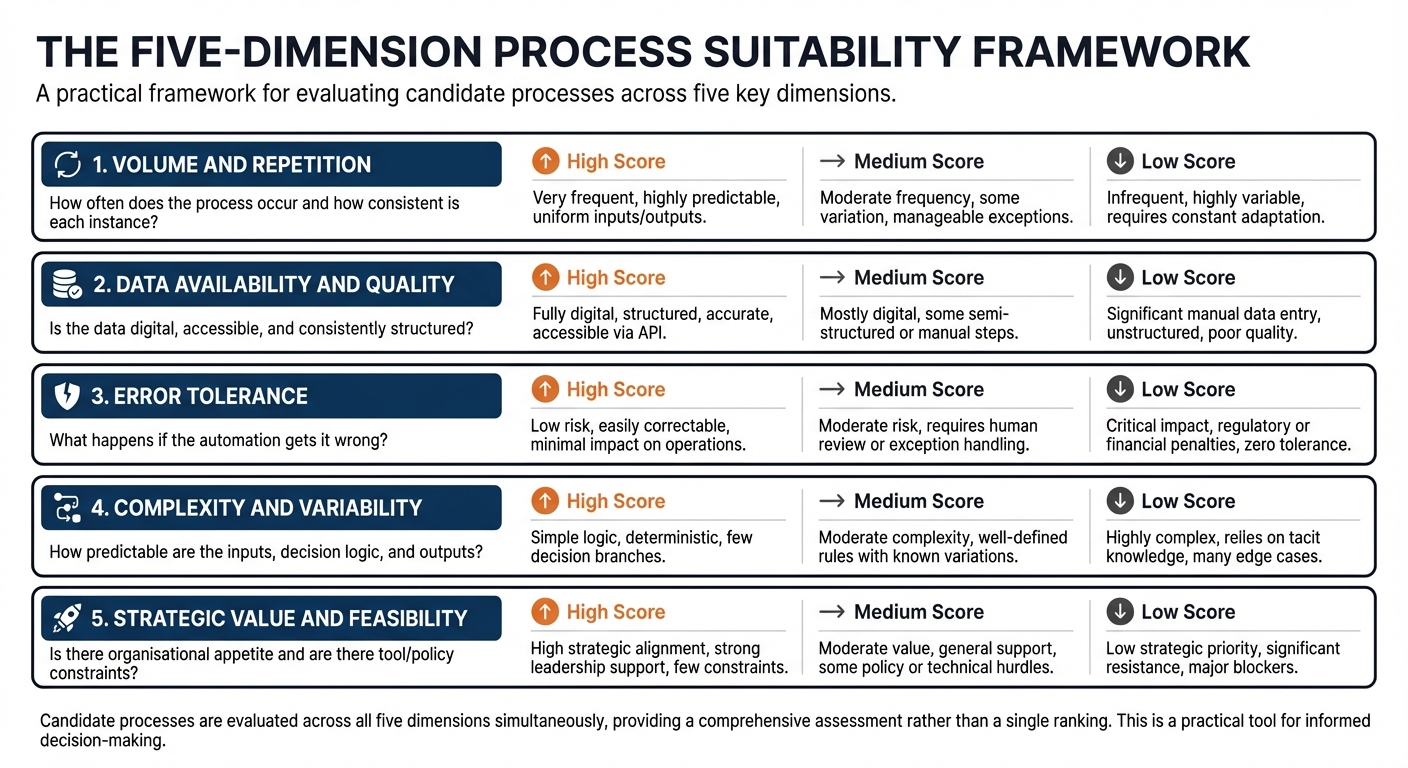

The Process Suitability Framework replaces instinct with structured analysis. It asks you to score each candidate process across five dimensions, writing specific rationale for each score. The point of doing this in writing is not bureaucracy. Written rationale forces precision in a way that mental scoring does not. When you have to explain why you scored a dimension High rather than Medium, you discover very quickly whether you actually know enough about the process to design an automation for it.

The five dimensions

Dimension 1 — Volume and repetition

The first question to ask about any process is how often it occurs and whether each instance is broadly similar in structure. Volume matters because the productivity benefit of automation scales with frequency: a process that happens twice a year may not justify the investment in building and maintaining an automation, even if it is technically straightforward. Repetition matters because automation depends on consistency. A process that occurs daily but looks different every time will require much more sophisticated design — often an AI agent rather than a structured workflow — and carries higher risk of errors at scale.

Strong evidence for a High score here includes a process that occurs daily or weekly, involves a clearly bounded set of input types, and produces a consistent category of output. A Medium score is appropriate when volume is moderate or when there is meaningful variation in inputs that will need to be handled. A Low score — which does not rule out automation but does raise the investment threshold significantly — applies to processes that are infrequent or highly variable.
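The volume argument can be made concrete with a little arithmetic. The sketch below is illustrative only: the function name, the minutes per instance, and the two example processes are assumptions, not figures from this course.

```python
def annual_hours(instances_per_year: int, minutes_per_instance: float) -> float:
    """Return the total hours a process consumes in a year."""
    return instances_per_year * minutes_per_instance / 60


# Hypothetical process A: 35 instances a week, 10 minutes each.
weekly_process = annual_hours(instances_per_year=52 * 35, minutes_per_instance=10)

# Hypothetical process B: twice a year, 4 hours each time.
twice_yearly_process = annual_hours(instances_per_year=2, minutes_per_instance=240)

print(f"Weekly process: about {weekly_process:.0f} hours a year")
print(f"Twice-yearly process: about {twice_yearly_process:.0f} hours a year")
```

Under these assumed figures the weekly process consumes roughly 300 hours a year and the twice-yearly one 8 hours, which is why frequency weighs so heavily in this dimension.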

Dimension 2 — Data availability and quality

Automation requires data. More specifically, it requires data that is digital, accessible, and consistently structured enough to be processed reliably. This dimension asks you to examine the data your candidate process depends on: where does it live, what format is it in, how consistently is it structured, and can an automated system reach it?

A process that runs on data already sitting in a CRM, a spreadsheet, or a structured form is in a strong position. A process that depends on information stored in paper documents, legacy systems with no API access, or in people's heads faces a data problem that will need solving before the automation problem can be addressed. Data quality matters too: if the inputs to a process are inconsistently formatted, missing fields, or of unreliable accuracy, the automation will inherit those problems and amplify them.
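If your data is already digital, one rough way to gather evidence for this dimension is to check a sample of records for missing required fields. The sketch below assumes the records can be loaded as Python dictionaries; the field names and sample records are hypothetical.

```python
# Hypothetical required fields for a supplier registration record.
REQUIRED_FIELDS = ["supplier_name", "contact_email", "tax_id"]


def missing_field_rate(records: list[dict], required: list[str]) -> float:
    """Return the share of records with at least one required field empty or absent."""
    if not records:
        return 0.0
    incomplete = sum(
        1
        for record in records
        if any(not str(record.get(field) or "").strip() for field in required)
    )
    return incomplete / len(records)


# Made-up sample: the second record has an empty email, the third has no tax_id.
sample = [
    {"supplier_name": "Acme Ltd", "contact_email": "ap@acme.example", "tax_id": "GB123"},
    {"supplier_name": "Birch & Co", "contact_email": "", "tax_id": "GB456"},
    {"supplier_name": "Cedar plc", "contact_email": "x@cedar.example"},
    {"supplier_name": "Dune Ltd", "contact_email": "ops@dune.example", "tax_id": "GB789"},
]

rate = missing_field_rate(sample, REQUIRED_FIELDS)
print(f"{rate:.0%} of sampled records are missing a required field")
```

A figure like this is the kind of evidence that belongs in your written rationale. It does not measure accuracy or formatting consistency, which need their own checks.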

Dimension 3 — Error tolerance

Every automated system makes mistakes occasionally. This dimension asks what happens when it does. There is a significant difference between a process where errors are caught by a human reviewer before they cause any impact, and a process where an error propagates downstream — into a customer communication, a financial record, a compliance document — before anyone notices.

High error tolerance means a human review step naturally sits in the process, errors are visible and correctable before they matter, and the consequence of an occasional mistake is low. Low error tolerance — which does not mean you cannot automate but means you must design very carefully — applies to processes where errors have significant financial, legal, reputational, or safety consequences, or where the volume of output means errors will accumulate before they are detected.

💬 Reflection

Think about the candidate processes on your longlist. For each one, ask: what would happen if the automated system produced an incorrect output one time in twenty? Would a human catch it before it caused a problem? Or would it be embedded in a record, a report, or a communication that has already been acted upon?

Your honest answer to that question is your starting point for scoring Dimension 3.
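To see what "one time in twenty" means in practice, multiply the error rate by the volume. The sketch below is illustrative: the function name and the volume of 35 outputs per week are assumptions chosen for the example.

```python
def expected_errors(outputs_per_week: int, error_rate: float, weeks: int = 1) -> float:
    """Return the expected number of incorrect outputs over the given number of weeks."""
    return outputs_per_week * error_rate * weeks


# Hypothetical volume: 35 outputs a week, one error in twenty.
per_week = expected_errors(35, 1 / 20)
per_year = expected_errors(35, 1 / 20, weeks=52)

print(f"Expected errors per week: {per_week:.2f}")
print(f"Expected errors per year: {per_year:.0f}")
```

At that assumed volume, a one-in-twenty error rate means nearly two incorrect outputs every week and around ninety a year. Whether that is tolerable depends on whether a human sees each output before it is acted upon.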

Dimension 4 — Complexity and variability

This dimension examines how predictable the inputs and outputs of the process are. A process with low complexity and variability has a narrow, well-defined set of input types, a clear decision logic, and a consistent output format. Each instance may be different in content, but the structure is the same. A process with high complexity and variability involves inputs that differ significantly in type or format, decision logic that depends on context and judgement, or outputs that vary substantially in nature depending on what is found during processing.

It is worth being precise about the difference between content variation and structural variation. An invoice processing pipeline handles thousands of invoices that are all different in content — different suppliers, amounts, and payment terms — but structurally similar enough to process in the same way. That is low structural variability, and it is highly automatable. A regulatory advice process handles cases that differ not just in content but in the type of analysis required, the regulatory framework applicable, and the nature of the output. That is high structural variability, and it requires a much more sophisticated — and riskier — approach.

Dimension 5 — Strategic value and feasibility

The final dimension asks whether the organisation is ready and willing to change this process. Technical suitability is necessary but not sufficient: a process that scores well on the first four dimensions may still face significant obstacles in implementation. These obstacles include the absence of a tool that integrates with the relevant systems, data governance constraints that restrict how data can be processed, regulatory requirements that affect what can be automated, and — perhaps most commonly — insufficient organisational appetite to change a process that people are used to, even if it is inefficient.

Strategic value also asks whether this process matters enough to be worth the investment. Some processes are technically automatable but peripheral to the organisation's core activity. Prioritising those over high-volume, high-impact processes in a central function is a common mistake in early automation programmes. Score this dimension with both appetite and impact in mind.

Writing strong rationale

The framework only works if the rationale behind each score is specific. A score without rationale is an opinion. A score with precise, evidence-based rationale is the beginning of a design brief.

The difference looks like this. A weak rationale for Dimension 1 reads: "High: this process happens a lot." A strong rationale reads: "High: our team receives and processes approximately 35 new supplier registration forms per week. Each form has the same seven required fields. Volume is consistent throughout the year with a modest increase in January and September." The strong version gives someone enough information to begin thinking about what a solution might look like. The weak version does not.

A weak rationale for Dimension 3 reads: "Medium: errors could be a problem." A strong rationale reads: "Medium: completed registrations are reviewed by a team leader before they are activated in the system, which provides a natural human review gate. However, the review is currently cursory due to time pressure, so errors in the automated extraction would need to be visually obvious to be reliably caught." That level of specificity identifies both the mitigation already in place and a potential gap in it. That is the kind of analysis that shapes a well-designed solution.
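If you prefer to keep your assessments in a structured form, a scored dimension can be recorded and given a basic completeness check. The sketch below is one possible layout, not part of the Unit 3 Workbook: the class, the function, and the two-sentence minimum are assumptions, and counting sentences is only a crude proxy that cannot tell you whether the rationale is specific.

```python
from dataclasses import dataclass

DIMENSIONS = (
    "Volume and repetition",
    "Data availability and quality",
    "Error tolerance",
    "Complexity and variability",
    "Strategic value and feasibility",
)
VALID_SCORES = ("High", "Medium", "Low")


@dataclass
class DimensionScore:
    dimension: str
    score: str
    rationale: str


def rationale_problems(entry: DimensionScore, min_sentences: int = 2) -> list[str]:
    """Return a list of basic problems found in one scored dimension."""
    problems = []
    if entry.dimension not in DIMENSIONS:
        problems.append("unknown dimension")
    if entry.score not in VALID_SCORES:
        problems.append("score must be High, Medium, or Low")
    # Crude sentence count: non-empty chunks of text separated by full stops.
    sentences = [s for s in entry.rationale.split(".") if s.strip()]
    if len(sentences) < min_sentences:
        problems.append(f"rationale has fewer than {min_sentences} sentences")
    return problems


weak = DimensionScore("Volume and repetition", "High", "This process happens a lot.")
strong = DimensionScore(
    "Volume and repetition",
    "High",
    "Our team receives and processes approximately 35 new supplier registration "
    "forms per week. Each form has the same seven required fields. Volume is "
    "consistent throughout the year with a modest increase in January and September.",
)

print(rationale_problems(weak))
print(rationale_problems(strong))
```

The weak Dimension 1 example from this lesson is flagged for length and the strong one passes. A human reader still has to judge whether the evidence is precise enough to act on.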

📝 Activity 1 — Build your process longlist

Complete before your coaching session | Estimated time: 60 minutes

Using the Process Identification Worksheet in your Unit 3 Workbook, list every process in your role or team that you can identify. Aim for eight to ten. For each one, note its name, how often it occurs, what you produce at the end of it, and your initial instinct on whether AI could help and why.

Once you have your longlist, select your three most promising candidates and apply the five-dimension framework to each one. Score every dimension High, Medium, or Low and write two to three sentences of specific rationale for each score. Refer back to the strong and weak rationale examples in this lesson if you are unsure what level of detail is expected.

Bring your completed longlist and scored assessments to your coaching session. Your coach will use them to help you identify which candidate to take forward into workflow mapping in Lesson 3.

Your rationale is portfolio evidence. Write as if someone who does not know your organisation will read it and need to understand the process clearly from your description alone.

⏭️ Up next — Lesson 3: With your candidate processes scored, the final step before your business case is producing a current-state workflow map of your most promising candidate. Lesson 3 covers what a workflow map is, how to build one, and why it is the foundation of everything that comes next.