Lesson 1 — The AI Landscape: What Is What?

Unit 1 | Lesson 1 of 4 | Estimated time: ~30 minutes

By the end of this lesson, you will be able to:

- Describe the difference between traditional rule-based automation, Generative AI, and AI agents in plain language

- Explain why choosing the right type of automation for a task matters

- Define key terms you will use throughout this programme: LLM, hallucination, RAG, AI agent

Why vocabulary matters

Walk into any workplace conversation about AI and you will hear the same words used to mean completely different things. "Automation" gets applied to everything from a simple Excel macro to a self-directing AI pipeline. "AI" gets used for a spell checker and a large language model in the same sentence.

This imprecision is not just frustrating — it causes real problems. It leads organisations to choose the wrong tools, set the wrong expectations, and either over-invest in things that are not ready or dismiss opportunities that are genuinely valuable.

As an AI and Automation Practitioner, part of your value is being the person who can cut through the noise. That starts with a shared, precise vocabulary.

Traditional rule-based automation

Traditional automation follows instructions that humans write explicitly. If X happens, do Y. If the invoice amount is over £10,000, route it to the finance director. If the form field is blank, return an error. Every decision and every outcome is defined in advance.
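The invoice rules above can be sketched in a few lines of Python. This is only an illustration: the function name `route_invoice` and the default "standard approval queue" route are assumptions made for the example and are not part of any real finance system.

```python
from typing import Optional

# Threshold taken from the example in the text.
APPROVAL_THRESHOLD_GBP = 10_000


def route_invoice(amount_gbp: Optional[float]) -> str:
    """Apply fixed, hand-written rules to a single invoice amount."""
    if amount_gbp is None:
        # Rule: a blank field is an error.
        return "error: amount is blank"
    if amount_gbp > APPROVAL_THRESHOLD_GBP:
        # Rule: invoices over the threshold go to the finance director.
        return "finance director"
    # Hypothetical default route; the text does not name one.
    return "standard approval queue"


print(route_invoice(12_500))  # finance director
print(route_invoice(480))     # standard approval queue
print(route_invoice(None))    # error: amount is blank
```

Every outcome here was decided in advance by whoever wrote the rules. An input the author did not anticipate, such as an amount typed as "ten thousand pounds", would not be handled sensibly unless someone added a rule for it.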

This type of automation, which includes RPA (Robotic Process Automation), is exceptionally good at tasks that are:

- High volume and repetitive

- Consistent in structure (same inputs, same format, every time)

- Clearly defined, with no ambiguity in the decision logic

- Intolerant of variation or surprise

Think: copying data between systems, processing standard forms, sending automated notifications, running scheduled reports.

The critical limitation is equally clear: the moment inputs vary, the rules break. A PDF invoice formatted differently from usual. A customer query that does not fit a predefined category. A decision that requires judgement. Rule-based automation cannot interpret, infer, or adapt — it only does exactly what it was told.

📌 Key term — Robotic Process Automation (RPA): Software that mimics human actions on a computer interface (clicking, copying, pasting) to automate repetitive tasks. It follows fixed rules and cannot handle unexpected variation.

Machine Learning and Generative AI — the shift

Machine Learning (ML) introduced something fundamentally different: instead of following rules written by humans, a system learns patterns from data. Show it thousands of examples of fraudulent and legitimate transactions, and it learns to tell the difference — without a human having to write every rule.
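To make "learning from examples" concrete, here is a deliberately tiny sketch. It assumes a made-up dataset with a single feature (the transaction amount) and uses a very simple method: work out the typical amount for each label, then pick the label whose typical amount is closest. Real fraud detection uses far more data, many more features, and more capable models; the names `train` and `predict` are illustrative only.

```python
from statistics import mean

# Made-up labelled examples: (transaction amount in GBP, label).
examples = [
    (12.0, "legitimate"), (30.0, "legitimate"), (45.0, "legitimate"),
    (900.0, "fraudulent"), (1200.0, "fraudulent"), (1500.0, "fraudulent"),
]


def train(data):
    """Learn one typical amount (the mean) for each label."""
    by_label = {}
    for amount, label in data:
        by_label.setdefault(label, []).append(amount)
    return {label: mean(amounts) for label, amounts in by_label.items()}


def predict(model, amount):
    """Pick the label whose typical amount is closest to this amount."""
    return min(model, key=lambda label: abs(model[label] - amount))


model = train(examples)
print(predict(model, 25.0))    # legitimate
print(predict(model, 1100.0))  # fraudulent
```

Nobody wrote a rule such as "flag anything over a set amount". The dividing line came from the examples, so different examples would produce a different model.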

Generative AI (GenAI) represents a further shift. Large Language Models (LLMs) — the technology behind tools like ChatGPT, Claude, Gemini, and Microsoft Copilot — are trained on vast amounts of text. What they learn to do is predict what text should come next, given a prompt.

This turns out to be remarkably powerful.

The critical difference from traditional automation is that GenAI works with unstructured data — natural language, images, audio, messy documents — and it can generalise across problems. You do not need to define every possible input in advance. You describe what you need in plain language and get a response.

This means GenAI can do things that rule-based automation simply cannot:

- Draft a response to a customer complaint it has never seen before

- Summarise a 40-page report into three key points

- Extract structured data from an inconsistently formatted document

- Translate internal jargon into plain English for a non-specialist audience

📌 Key term — Large Language Model (LLM): An AI model trained on enormous amounts of text that can understand and generate human language. It works by repeatedly predicting the most likely next word, or fragment of a word (a "token"), given the context it has been provided.
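The idea of predicting what comes next can be shown with a toy example. The sketch below simply counts which word follows which in a tiny made-up text. This is an assumption-heavy simplification: real LLMs use neural networks trained on vastly more text and work on tokens, not whole-word counts. The function names are illustrative.

```python
from collections import Counter, defaultdict

# Tiny made-up training text; real models train on vastly more.
corpus = "the invoice was paid . the invoice was late . the report was late ."


def train_bigrams(text):
    """Count which word follows each word in the text."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


counts = train_bigrams(corpus)
print(predict_next(counts, "the"))  # invoice
print(predict_next(counts, "was"))  # late
```

Notice that the prediction is driven by what appeared most often in the training text, not by whether it is true. That is a useful intuition to carry into the discussion of hallucination.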

📌 Key term — Hallucination: When a GenAI model generates information that sounds plausible and confident but is factually incorrect. The model does not know it is wrong — it has no way of knowing. This is one of the most important limitations to understand in practice, and we will return to it in Lesson 2.

💬 Reflection

Think about a task you do regularly at work that involves reading, writing, or interpreting information. Is the input always the same structure and format — or does it vary? How much judgement does it require?

Keep this task in mind as you work through the rest of this unit. You may find yourself returning to it.

AI Agents — the next step

A standard GenAI model responds to one prompt at a time. You ask, it answers. That is powerful, but it is still reactive — it waits for you.

AI agents go further. An agent can be given a goal — not just a question — and will take a sequence of steps to try to achieve it. It can use tools: searching the web, querying a database, running code, calling an API, triggering other automations. It can evaluate its own intermediate outputs and adjust its approach.

The difference in practice: asking a GenAI tool to draft an email is a single-turn interaction. Asking an AI agent to research three competitor products, summarise their pricing, draft a comparison table, and email it to your line manager is a multi-step goal that requires tool use, sequencing, and intermediate decision-making.

Agents are more powerful — and more complex. More steps means more places where errors can accumulate. More autonomy means more potential for unintended consequences. The human-in-the-loop principle, which runs through this entire programme, becomes even more critical when agents are involved.

📌 Key term — AI Agent: An AI system that can take sequences of actions to achieve a goal, using tools (search, code execution, API calls) and making intermediate decisions. It operates with more autonomy than a single-turn GenAI model.
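The loop at the heart of an agent (decide on a step, use a tool, record the result, repeat) can be sketched as follows. Everything here is a stand-in: the two tools use made-up data, and `choose_next_step` is a hand-written substitute for the decision a real agent would ask an LLM to make. The step limit is one simple way of keeping autonomy bounded.

```python
def search_prices(product):
    """Hypothetical tool: look up a price from made-up data."""
    prices = {"Product A": 20, "Product B": 35}
    return prices.get(product)


def make_table(rows):
    """Hypothetical tool: format results as a plain-text table."""
    return "\n".join(f"{name}: GBP {price}" for name, price in rows)


def choose_next_step(goal_products, found):
    """Stand-in for the model's decision about what to do next."""
    for product in goal_products:
        if product not in found:
            return ("search", product)
    return ("finish", None)


def run_agent(goal_products, max_steps=10):
    """Decide, act, record the result; stop when done or out of steps."""
    found = {}
    for _ in range(max_steps):
        action, argument = choose_next_step(goal_products, found)
        if action == "finish":
            return make_table(found.items())
        found[argument] = search_prices(argument)
    raise RuntimeError("step limit reached before the goal was met")


print(run_agent(["Product A", "Product B"]))
```

In a real deployment, a step with consequences, such as sending the email, is a natural point to pause for human approval before the agent continues.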

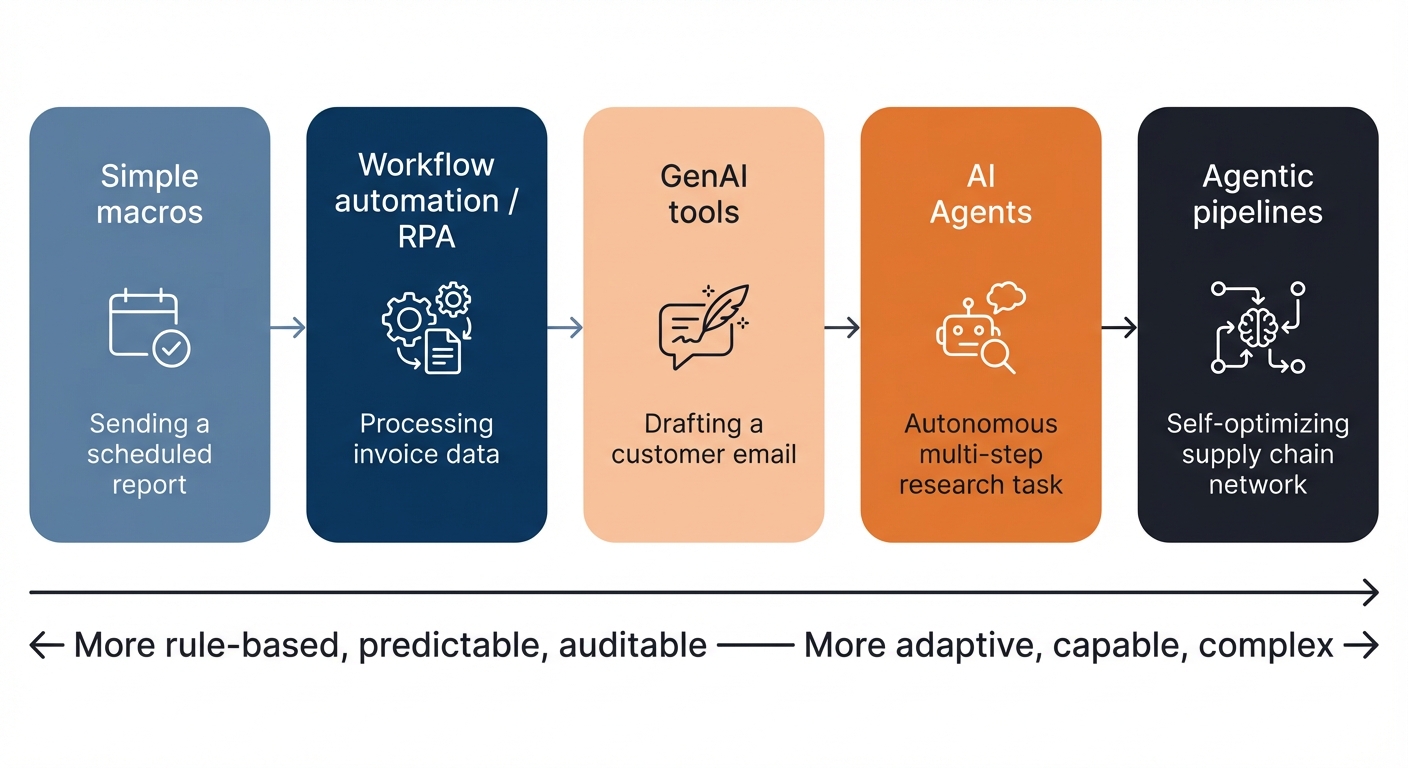

The automation spectrum

These are not rigid, separate categories; they sit on a spectrum that runs from rule-based automation, through GenAI, to AI agents.

Moving right along the spectrum, towards agents, does not automatically mean better. A task that is perfectly handled by a simple macro does not need a GenAI agent. Choosing the right level of sophistication for the task at hand is one of the core practitioner skills you will develop throughout this programme.

Three more concepts you will encounter

You do not need to be an expert in these yet — but you will encounter them, and it helps to know what they mean.

Fine-tuning — Taking a general LLM and training it further on a specific dataset (for example, your company's internal documents) so it performs better on your domain or style. More resource-intensive than standard deployment, but can significantly improve relevance and accuracy for specialist use cases.

Embeddings — A way of representing meaning numerically so that similar concepts can be found mathematically. Foundational to search and retrieval systems that connect GenAI to specific knowledge bases.
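As a rough illustration of finding similar concepts mathematically, the sketch below compares made-up three-number vectors using cosine similarity, a common measure of how closely two vectors point in the same direction. The numbers are invented for the example. Real embeddings are produced by a model and typically contain hundreds or thousands of numbers.

```python
import math

# Made-up 3-number "embeddings", invented purely for illustration.
vectors = {
    "holiday": [0.9, 0.1, 0.0],
    "annual leave": [0.8, 0.2, 0.1],
    "invoice": [0.0, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Return a score near 1.0 when two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(query, table):
    """Find the other entry whose vector is closest to the query's."""
    others = [name for name in table if name != query]
    return max(others, key=lambda name: cosine_similarity(table[query], table[name]))


print(most_similar("holiday", vectors))  # annual leave
```

"Holiday" and "annual leave" share no words, yet their vectors are close. That is why embedding-based search can find relevant material that a keyword search would miss.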

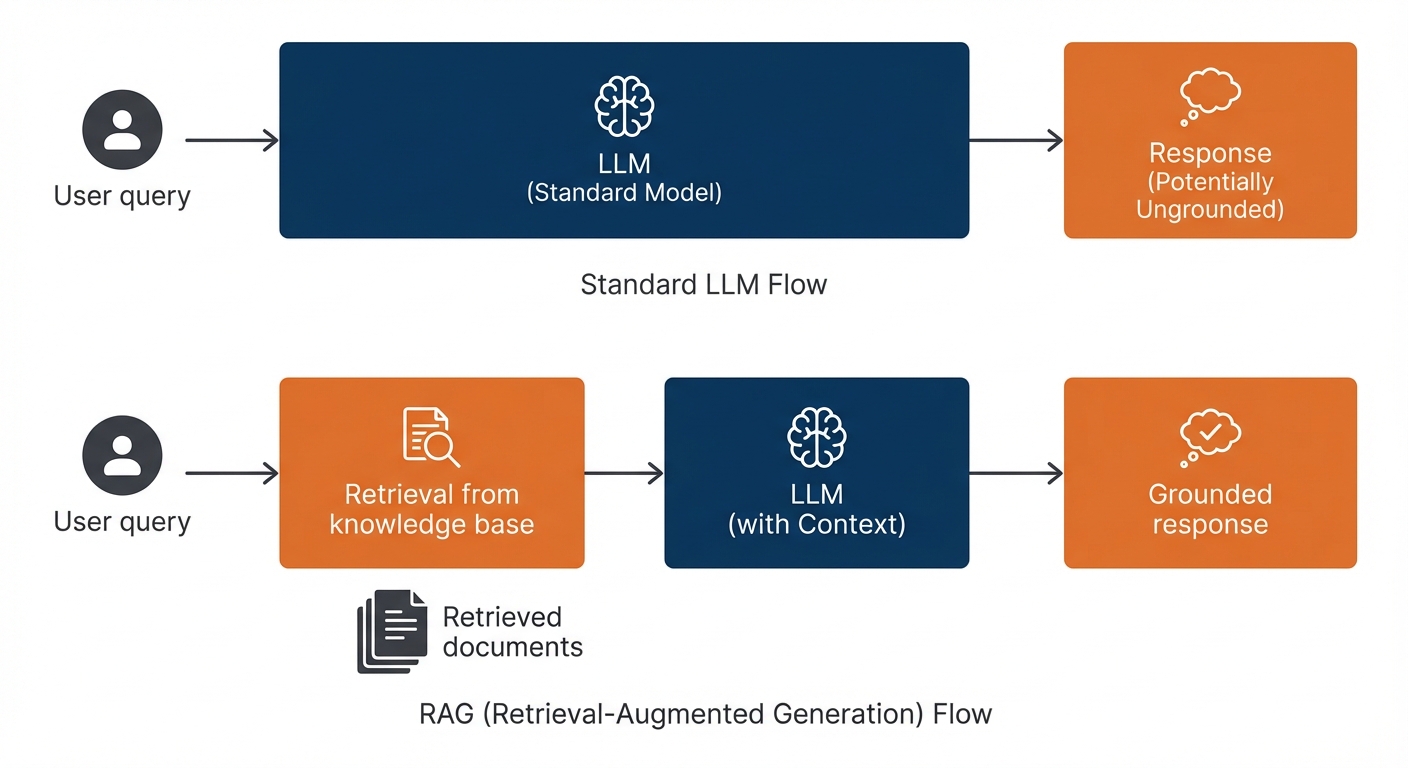

Retrieval-Augmented Generation (RAG) — A technique where a GenAI model is connected to a knowledge base. Rather than relying purely on what it learned during training, it retrieves relevant documents at the moment of your query and uses them to generate a more accurate, grounded response. Very widely used in enterprise AI deployments as a way of reducing hallucination risk.
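The retrieve-then-generate pattern can be sketched without calling any model. In the example below, the policy snippets are made up, and retrieval uses simple word overlap as a stand-in for the embedding-based search a real system would more likely use. The sketch stops at building the prompt; sending it to an LLM is left out.

```python
# Made-up snippets standing in for a knowledge base.
documents = [
    "Flexible working requests are reviewed by your line manager.",
    "Expense claims must be submitted by the end of each month.",
    "The office is closed on public holidays.",
]


def words(text):
    """Split text into a set of lowercase words without punctuation."""
    return {w.strip(".,?!").lower() for w in text.split()}


def retrieve(question, docs):
    """Return the document sharing the most words with the question."""
    question_words = words(question)
    return max(docs, key=lambda doc: len(question_words & words(doc)))


def build_prompt(question, docs):
    """Combine the retrieved text and the question into one prompt."""
    context = retrieve(question, docs)
    return (
        "Answer using only the context below.\n"
        f"Context: {context}\n"
        f"Question: {question}"
    )


print(build_prompt("Who reviews flexible working requests?", documents))
```

Because the model is handed the relevant text at the moment of the query, its answer can be checked against a known source. This reduces hallucination risk but does not remove it, since the model can still misread or misreport the retrieved text.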

📝 Activity 1 — The AI Distinction Challenge

Complete before your coaching session | Estimated time: 30 minutes

Read the three workplace scenarios below. For each one, write a short response covering:

(i) Whether it is best served by traditional automation, GenAI, or an AI agent

(ii) One reason why

(iii) One risk or limitation to consider

Record your responses in your Unit 1 Workbook.

Scenario A

Your team receives approximately 200 expense claim forms by email each month. Every form has the same layout and the same fields. Your job is to extract the total amount, employee name, and department from each one and add them to a spreadsheet.

Scenario B

Your manager wants a tool that can respond to internal HR queries about the company's flexible working policy. Employees would type questions in natural language and get answers based on the current policy document.

Scenario C

Your organisation wants to explore whether it is feasible to automatically monitor customer feedback across email, survey responses, and social media — flagging emerging issues by topic and sentiment, and generating a weekly summary report for the leadership team.

There is no single correct answer here. The quality of your reasoning matters more than the conclusion you reach. Your coach will discuss your responses with you in your session.

⏭️ Up next — Lesson 2: Now that you know what these tools are, we look at what they can — and cannot — reliably do in practice. The hallucination problem, the human-in-the-loop principle, and why augmentation beats replacement.